There’s no doubt that WebAssembly (AKA Wasm) is having a moment on the development stage. And while it may seem like a flash in the pan to some, we believe Wasm has a key role in continued containerized development. Docker and Wasm can be complementary technologies.

In the past, we’ve explored how Docker could successfully run Wasm modules alongside Linux or Windows containers. Nearly five months later, we’ve taken another big step forward with the Docker+Wasm Technical Preview. Developers need exceptional performance, portability, and runtime isolation more than ever before.

Chris Crone, a Director of Engineering at Docker, and Michael Yuan, founder and CEO of Second State, addressed these sticking points at the CNCF’s Wasm Day 2022. Their full session is available to watch, but feel free to stick around for our condensed breakdown.

You don’t need to learn new processes to develop successfully with Docker and Wasm. Popular Docker CLI commands can tackle this for you. Docker can even manage the WebAssembly runtime thanks to our collaboration with WasmEdge. We’ll dive into why we’re taking on this new project and the technical mechanisms that make it possible.

Why WebAssembly and Docker?

How workloads and code are isolated has a major impact on how quickly we can deliver software to users. Chris highlights this by explaining how developers value:

- Easy reuse of components and defined interfaces across projects that help build value quicker

- Maximization of shared compute resources while maintaining safe, sturdy boundaries between workloads — lowering the cost of application delivery

- Seamless application delivery to users, in seconds, through convenient packaging mechanisms like container images so users see value quicker

We know that workload isolation plays a role in these things, yet there are numerous ways to achieve it — like air gapping, hardware virtualization, stack virtualization (Wasm or JVM), containerization, and so on. Since each has unique advantages and disadvantages, choosing the best solution can be tricky.

Finding the right tools can also be enormously difficult. The CNCF tooling landscape alone is saturated, and while we’re thankful these tools exist, the variety is overwhelming for many developers.

Chris believes that specialized tooling can conquer the task at hand. It’s also our responsibility at Docker to guide these tooling decisions. This builds upon our continued mission to help developers build, share, and run their applications as quickly as possible.

That’s where WasmEdge — and Michael Yuan — come in.

Exciting opportunities with Docker and WasmEdge

Michael showed there’s some overlap between container and WebAssembly use cases. For example, developers from both camps might want to ship microservice applications. Wasm can enable quicker startup times and code-level security, which are beneficial in many cases.

However, WebAssembly doesn’t fit every use case due to threading, garbage collection, and binary packaging limitations. Running applications with Wasm also requires extra tooling, currently.

WasmEdge in action: TensorFlow interface

Michael then kicked off a TensorFlow ML application demo to show what WasmEdge can do. This application wouldn’t work with other WASI-compatible runtimes:

A few things made this demo possible:

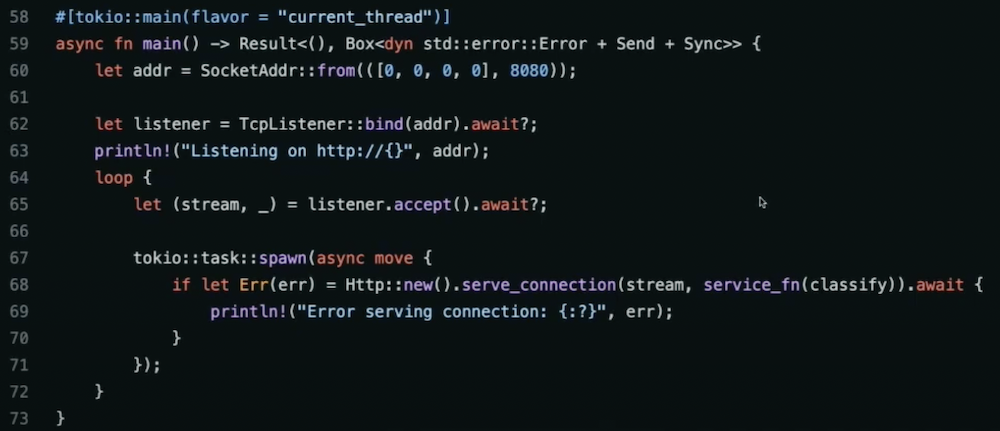

- Rust: a safe and fast programming language with first-class support for the Wasm compiling target.

- Tokio: a popular asynchronous runtime that can handle multiple concurrent HTTP requests without multithreading.

- WasmEdge’s TensorFlow: a plug-in compatible with the WASI-NN spec. Besides TensorFlow, PyTorch and OpenVINO are also supported in WasmEdge.

Note: Tokio and TensorFlow support are WasmEdge features that aren’t available on other WASI-compliant runtimes.

With Rust’s cargo build command, we can compile the program into a Wasm module using the wasm32-wasi target platform. The WasmEdge runtime can execute the resulting .wasm file. Once the application is running, we can perform HTTP queries to run some pretty cool image recognition tasks.
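As a rough sketch of that build-and-run flow, the commands below assume a Cargo project in the current directory whose binary is named `classify` (a hypothetical name, not taken from the talk) and that `rustup`, `cargo`, and `wasmedge` are installed. The script only prints each command unless `DRY_RUN=0` is set. Recent Rust toolchains call this target `wasm32-wasip1`; `wasm32-wasi` is the name used in this post.

```shell
#!/bin/sh
# Sketch only: CRATE is a hypothetical binary name, not taken from the demo.
TARGET=wasm32-wasi
CRATE=classify
WASM_PATH="target/${TARGET}/release/${CRATE}.wasm"

# Print commands by default; set DRY_RUN=0 to actually run them.
DRY_RUN="${DRY_RUN:-1}"
run() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

run rustup target add "$TARGET"               # install the Wasm target once
run cargo build --target "$TARGET" --release  # writes the module to $WASM_PATH
run wasmedge "$WASM_PATH"                     # execute the module with WasmEdge
```

The TensorFlow demo itself also depends on WasmEdge’s TensorFlow plug-in, which this sketch does not install.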

This example illustrates the draw of WasmEdge as a WASI-compatible runtime. According to its maintainers, “WasmEdge is a lightweight, high-performance, and extensible WebAssembly runtime for cloud native, edge, and decentralized applications. It powers serverless apps, embedded functions, microservices, smart contracts, and IoT devices.”

Making Wasm accessible with Docker

Docker has two magical features. First, Docker and containers work on any machine and anywhere in production. Second, Docker makes it easy to build, share, and reuse components from any project. Container images and other OCI artifacts are easy to consume (and share). Isolation is baked in. Millions of developers are also very familiar with many Docker workflows like docker compose up.

Chris described how standardization and open ecosystems make Docker and container tooling available everywhere. The OCI specifications are crucial here and let us form new solutions that’ll work for nearly anyone and any supported technology (like Wasm).

On the other hand, setting up cross-platform Wasm developer environments is tricky. You also have to learn new tools and workflows — hampering productivity while creating frustration. We believe we can help developers overcome these challenges, and we’re excited to leverage our own platform to make Wasm more accessible.

Demoing Docker+WasmEdge

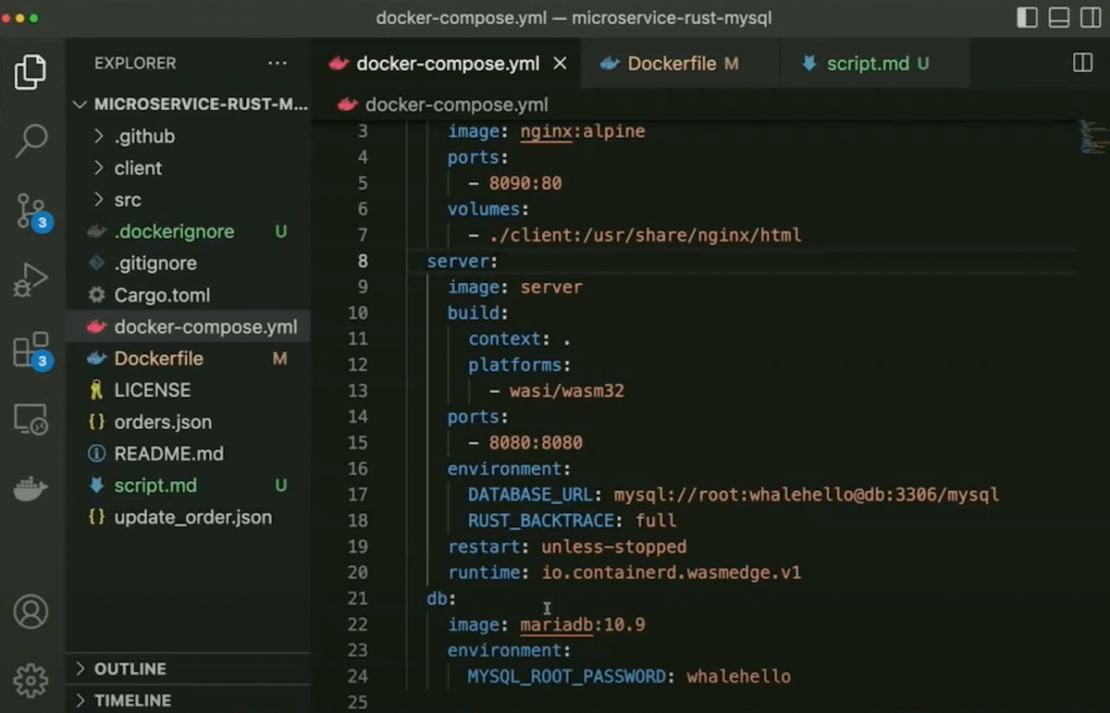

How does Wasm support look in practice? Chris fired up a demo using a preview of Docker Desktop, complete with WASI support. He created a Docker Compose file with three services:

- A frontend static JavaScript client using the NGINX Docker Official Image

- A Rust server compiled to wasi/wasm32

- A MariaDB database

That Rust server runs as a Wasm module, while the NGINX and MariaDB servers run in Linux containers. Chris built this Rust server using a Dockerfile that compiled from his local platform to a wasm32-wasi target. He also ran WasmEdge’s own ahead-of-time (AOT) compiler to optimize the built Wasm module. This step is optional, however, and AOT-optimized modules run only on the WasmEdge runtime.
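A Dockerfile for that kind of cross-compilation might look roughly like the one written out below. This is a minimal sketch, not the file from the demo: the `wasm-demo` directory, the `rust:1.64` base image tag, and the `server.wasm` binary name are all assumptions, and the optional AOT step is left out.

```shell
#!/bin/sh
# Sketch only: the directory, image tag, and server.wasm name are assumptions.
mkdir -p wasm-demo

# Quoting 'EOF' keeps $BUILDPLATFORM literal so Docker, not the shell, expands it.
cat > wasm-demo/Dockerfile <<'EOF'
# Build stage: runs on the developer's native platform and cross-compiles to Wasm.
FROM --platform=$BUILDPLATFORM rust:1.64 AS build
RUN rustup target add wasm32-wasi
WORKDIR /src
COPY . .
RUN cargo build --target wasm32-wasi --release

# Final stage: an otherwise empty image that holds only the Wasm module.
FROM scratch
COPY --from=build /src/target/wasm32-wasi/release/server.wasm /server.wasm
ENTRYPOINT ["/server.wasm"]
EOF
```

Starting the final stage from `scratch` is what keeps the resulting image close to the size of the `.wasm` file itself.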

We’ll leave the nitty-gritty to Chris (see 19:43 for the demo) for now. However, know that you can run a Compose build and come away with a wasi/wasm32 platform image. Running docker compose up launches your application, which you can then interact with through your web browser. This is one way to seamlessly run containers and Wasm side by side.
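To make the three-service setup concrete, here is a sketch of what such a Compose file could look like. The service names, ports, image tags, and database password are placeholders rather than values from the demo. The `runtime` name is an assumption based on the technical preview, and `build: .` assumes a Dockerfile in the same directory that produces the Wasm image.

```shell
#!/bin/sh
# Sketch only: service names, ports, image tags, and the password are placeholders.
mkdir -p wasm-demo

cat > wasm-demo/compose.yaml <<'EOF'
services:
  client:                      # static JavaScript frontend served by NGINX
    image: nginx:alpine
    ports:
      - "8090:80"
    volumes:
      - ./client:/usr/share/nginx/html
  server:                      # Rust microservice packaged as a Wasm module
    build: .
    platform: wasi/wasm32
    runtime: io.containerd.wasmedge.v1   # assumed runtime name from the technical preview
    ports:
      - "8080:8080"
    depends_on:
      - db
  db:                          # regular Linux container
    image: mariadb:10.9
    environment:
      MARIADB_ROOT_PASSWORD: example
EOF
```

Only the `server` service declares a Wasm platform and runtime. The other two services are ordinary Linux containers, which is what lets a single `docker compose up` start both kinds of workload together.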

From the Docker CLI, you’ll see the Wasm microservice is less than 2 MB. It contains a high-performance HTTP server and a MySQL database client. The NGINX and MariaDB images are 10 MB and 120 MB, respectively. By comparison, the same Rust microservice would be tens of megabytes if built as a Linux binary and run in a Linux container. This underscores how lightweight Wasm images are.

Since the output is an OCI image, you can store or share it using an OCI-compliant registry like Docker Hub. You don’t have to learn complex new workflows. And while Chris and Michael centered on WasmEdge, Docker should support any WASI runtime.

The approach is interoperable with containers and has early support within Docker Desktop. Although Wasm might initially seem unfamiliar, integration with the Docker ecosystem flattens that learning curve.

The future of Docker and Wasm

As Chris mentioned, we’re invested in making Docker and Wasm work well together. Our recent Docker+Wasm Technical Preview is a major step towards boosting interoperability. However, we’re also thrilled to explore how Docker tooling can improve the lives of Wasm-hungry developers — no matter their goals.

Docker wants to get involved with the Wasm community to better understand how developers like you are building your WebAssembly applications. Your use cases and obstacles matter. By sharing our experiences with the container ecosystem with the community, we hope to accelerate Wasm’s growth and help you tackle that next big project.

Get started and learn more

Want to test run Docker and Wasm? Check out Chris’ GitHub page for links to special Wasm-compatible Docker Desktop builds, demo repos, and more. We’d also love to hear your feedback as we continue bolstering Docker+Wasm support!

Finally, don’t miss the chance to learn more about WebAssembly and microservices — alongside experts and fellow developers — at an upcoming meetup.