In the rapidly evolving field of machine learning (ML) and artificial intelligence (AI), TensorFlow has emerged as a leading framework for developing and deploying sophisticated models. TensorFlow.js brings those capabilities to JavaScript developers.

TensorFlow.js is a JavaScript machine learning library for creating ML models and running them directly in browsers or Node.js apps. It extends TensorFlow into everyday web development. A notable feature of TensorFlow.js is its ability to use pretrained models, which opens up a wide range of possibilities for developers.

In this article, we will explore the concept of pretrained models in TensorFlow.js and Docker and delve into the potential applications and benefits.

Understanding pretrained models

Pretrained models are a powerful tool for developers because they let you use ML models without having to train them yourself. This approach can save a lot of time and effort, and the result is often more accurate than a model you train from scratch on limited data.

A pretrained model is an ML model that has already been trained on a large volume of data. Because these models have learned complex patterns and representations from that data, they can be very effective at the specific tasks they were trained for. Using one also spares developers the computing resources that training from scratch would require.

Types of pretrained models available

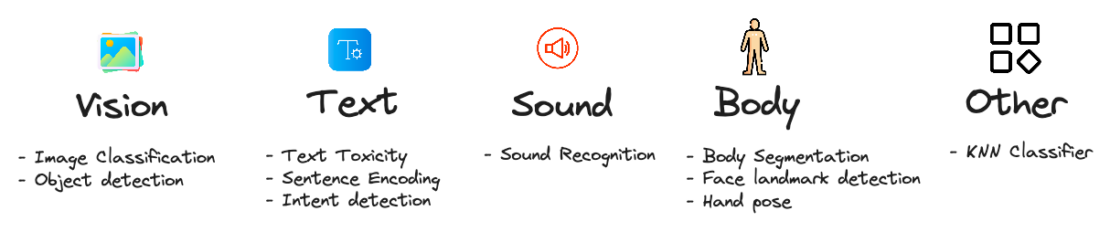

There is a wide range of potential applications for pretrained models in TensorFlow.js.

For example, developers could use them to:

- Build image classification models that can identify objects in images.

- Build natural language processing (NLP) models that can understand and respond to text.

- Build speech recognition models that can transcribe audio into text.

- Build recommendation systems that can suggest products or services to users.

TensorFlow.js and pretrained models

Developers can easily include pretrained models in their web applications using TensorFlow.js. With TensorFlow.js, you can benefit from robust machine learning algorithms without needing to be an expert in model training or deployment. The library offers a wide variety of pretrained models, including those for audio analysis, image recognition, natural language processing, and more (Figure 1).
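As a rough illustration of how an app might consume a pretrained classifier's output, the sketch below pairs class probabilities with labels and keeps the most likely ones. The topK helper, the scores, and the labels are made up for illustration; they are not part of TensorFlow.js.

```javascript
// Hypothetical helper: pair class probabilities with labels and keep the k best.
function topK(probabilities, labels, k = 3) {
  return probabilities
    .map((probability, index) => ({ label: labels[index], probability }))
    .sort((a, b) => b.probability - a.probability)
    .slice(0, k);
}

// Made-up scores standing in for a classifier's output.
const scores = [0.05, 0.7, 0.25];
const labels = ['cat', 'dog', 'fox'];
const best = topK(scores, labels, 2);
console.log(best);
```

In a real app, the probabilities would come from the model's prediction and the labels from the dataset the model was trained on.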

How does it work?

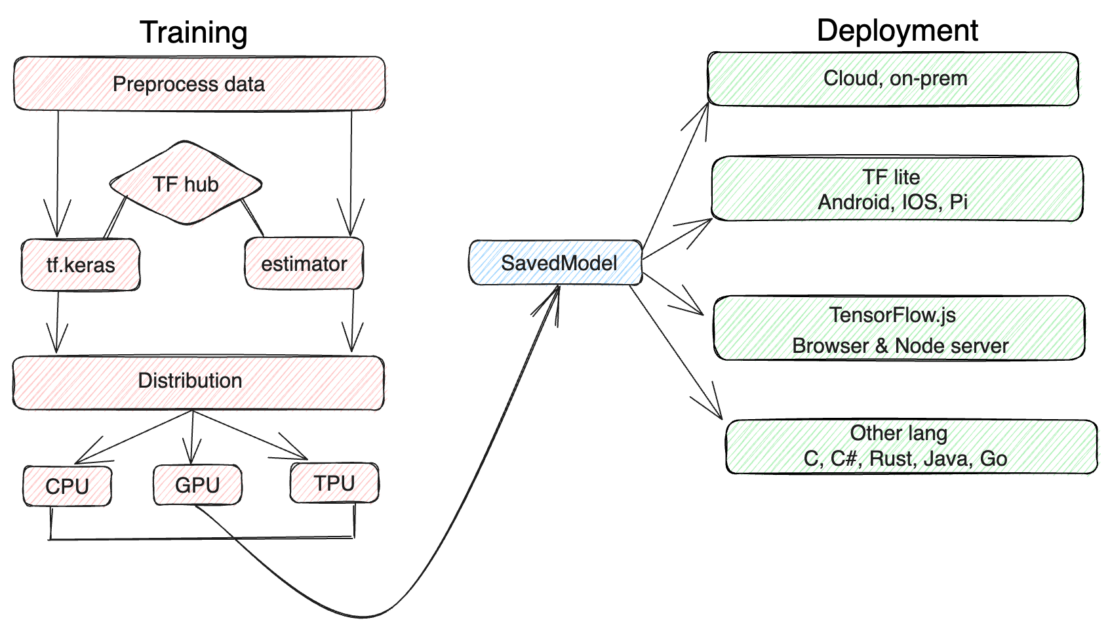

TensorFlow.js can load models that originate in the TensorFlow SavedModel or Keras formats; for use in the browser, such models are typically converted to the TensorFlow.js web format first. Once the model has been loaded, developers can use it by calling the methods that the model API exposes. Figure 2 shows the steps involved in training, distribution, and deployment.
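A minimal sketch of the loading step, assuming the model has already been converted for TensorFlow.js: tf.loadGraphModel is the loader for converted SavedModels and tf.loadLayersModel the one for converted Keras models. The pickLoader and loadModel helpers, the 'graph' and 'layers' format names, and the example URL are hypothetical, and the library object is passed in so that the sketch runs without TensorFlow.js installed.

```javascript
// Hypothetical helper: choose the TensorFlow.js loader for a converted model.
// 'graph' is assumed to mean a converted SavedModel, 'layers' a converted Keras model.
function pickLoader(format) {
  if (format === 'graph') return 'loadGraphModel';
  if (format === 'layers') return 'loadLayersModel';
  throw new Error(`Unknown model format: ${format}`);
}

// `tf` is passed in so the sketch does not depend on the library being installed.
function loadModel(tf, url, format) {
  return tf[pickLoader(format)](url);
}

// Stand-in for the real library, used only to demonstrate the call.
const fakeTf = {
  loadGraphModel: async (url) => ({ kind: 'graph', url }),
  loadLayersModel: async (url) => ({ kind: 'layers', url }),
};

loadModel(fakeTf, 'https://example.com/model.json', 'graph')
  .then((model) => console.log(model.kind));
```

With the real library, you would pass the imported tf object instead of fakeTf and then call the loaded model's prediction methods.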

Training

The training section shows the steps involved in training a machine learning model. The first step is to collect data. This data is then preprocessed, which means that it is cleaned and prepared for training. The data is then fed to a machine learning algorithm, which trains the model.

- Preprocess data: This is the process of cleaning and preparing data for training. This includes tasks such as removing noise, correcting errors, and normalizing the data.

- TF Hub: TF Hub is a repository of pretrained ML models. These models can be used to speed up the training process or to improve the accuracy of a model.

- tf.keras: tf.keras is a high-level API for building and training machine learning models. It is built on top of TensorFlow, which is a low-level library for machine learning.

- Estimator: An estimator is a type of model in TensorFlow. It is a simplified way to build and train ML models.
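To make the normalization part of preprocessing concrete, here is a small sketch of min-max scaling in plain JavaScript. The minMaxNormalize name and the sample values are illustrative only; real pipelines usually do this on tensors rather than arrays.

```javascript
// Scale values linearly into [0, 1]; a constant input maps to all zeros.
function minMaxNormalize(values) {
  const min = Math.min(...values);
  const max = Math.max(...values);
  const range = max - min;
  return values.map((v) => (range === 0 ? 0 : (v - min) / range));
}

// Sample 8-bit pixel intensities.
const pixels = [0, 51, 102, 255];
const normalized = minMaxNormalize(pixels);
console.log(normalized);
```

Scaling inputs to a common range like this is one of the ways to prepare data so that a model sees the same value range during training and prediction.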

Distribution

Distribution is the process of making a machine learning model available to users. This can be done by packaging the model in a format that can be easily shared, or by deploying the model to a production environment.

The distribution section shows the steps involved in distributing a machine learning model. The first step is to package the model. This means that the model is converted into a format that can be easily shared. The model is then distributed to users, who can then use it to make predictions.

Deployment

The deployment section shows the steps involved in deploying a machine learning model. The first step is to choose a framework. A framework is a set of tools that makes it easier to build and deploy machine learning models. The model is then converted into a format that can be used by the framework. The model is then deployed to a production environment, where it can be used to make predictions.

Benefits of using pretrained models

There are several pretrained models available in TensorFlow.js that can be utilized immediately in any project and offer the following notable advantages:

- Savings in time and resources: Building an ML model from scratch might take a lot of time and resources. Developers can skip this phase and use a model that has already learned from lengthy training by using pretrained models. The time and resources needed to implement machine learning solutions are significantly decreased as a result.

- State-of-the-art performance: Pretrained models are typically trained on huge datasets and refined by specialists, producing models that give state-of-the-art performance across a range of applications. Developers can benefit from these models’ high accuracy and reliability by incorporating them into TensorFlow.js, even if they lack a deep understanding of machine learning.

- Accessibility: TensorFlow.js makes pretrained models accessible to web developers, allowing them to quickly integrate machine learning capabilities into their projects. This accessibility creates new opportunities for web-based solutions that build on machine learning.

- Transfer learning: Pretrained models can also serve as the starting point for your own training. Using a smaller, domain-specific dataset, developers can further train a pretrained model. Transfer learning lets models adapt quickly to particular use cases, which makes this method especially helpful when data is scarce.
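One common way to set up transfer learning is to freeze the early layers of a pretrained model and train only the last few on your own data. The sketch below shows that idea on a plain-object stand-in. It assumes the model exposes a layers array whose items have a writable trainable flag, as a TensorFlow.js LayersModel does; freezeBaseLayers and the layer names are hypothetical.

```javascript
// Hypothetical helper: mark all but the last `trainableCount` layers as frozen.
// Assumes `model.layers` is an array of objects with a writable `trainable` flag.
function freezeBaseLayers(model, trainableCount = 1) {
  const cutoff = Math.max(0, model.layers.length - trainableCount);
  model.layers.forEach((layer, index) => {
    layer.trainable = index >= cutoff;
  });
  return model;
}

// Plain-object stand-in for a loaded pretrained model.
const fakeModel = {
  layers: [
    { name: 'conv1', trainable: true },
    { name: 'conv2', trainable: true },
    { name: 'dense', trainable: true },
  ],
};
freezeBaseLayers(fakeModel, 1);
console.log(fakeModel.layers.map((l) => `${l.name}: ${l.trainable}`));
```

After freezing, only the remaining trainable layers would be updated when the model is trained on the smaller, domain-specific dataset.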

Why is containerizing TensorFlow.js important?

Containerizing TensorFlow.js brings several important benefits to the development and deployment process of machine learning applications. Here are five key reasons why containerizing TensorFlow.js is important:

- Docker provides a consistent and portable environment for running applications. By containerizing TensorFlow.js, you can package the application, its dependencies, and runtime environment into a self-contained unit. This approach allows you to deploy the containerized TensorFlow.js application across different environments, such as development machines, staging servers, and production clusters, with minimal configuration or compatibility issues.

- Docker simplifies the management of dependencies for TensorFlow.js. By encapsulating all the required libraries, packages, and configurations within the container, you can avoid conflicts with other system dependencies and ensure that the application has access to the specific versions of libraries it needs. This containerization eliminates the need for manual installation and configuration of dependencies on different systems, making the deployment process more streamlined and reliable.

- Docker ensures the reproducibility of your TensorFlow.js application’s environment. By defining the exact dependencies, libraries, and configurations within the container, you can guarantee that the application will run consistently across different deployments.

- Docker enables seamless scalability of the TensorFlow.js application. With containers, you can easily replicate and distribute instances of the application across multiple nodes or servers, allowing you to handle high volumes of user requests.

- Docker provides isolation between the application and the host system and between different containers running on the same host. This isolation ensures that the application’s dependencies and runtime environment do not interfere with the host system or other applications. It also allows for easy management of dependencies and versioning, preventing conflicts and ensuring a clean and isolated environment in which the application can operate.

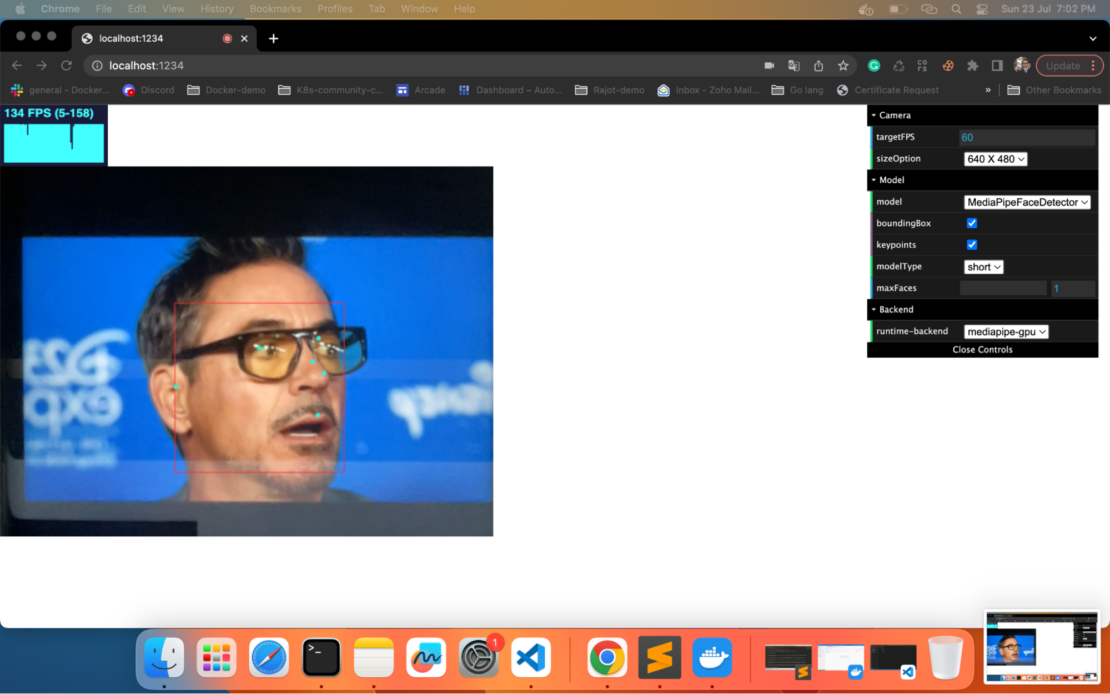

Building a fully functional ML face-detection demo app

By combining TensorFlow.js and Docker, developers can create a fully functional ML face-detection demo app. Once the app is deployed, the TensorFlow.js model detects faces in real time using the camera. With a minor code change, developers can instead build an app that lets users upload images or videos for face detection.

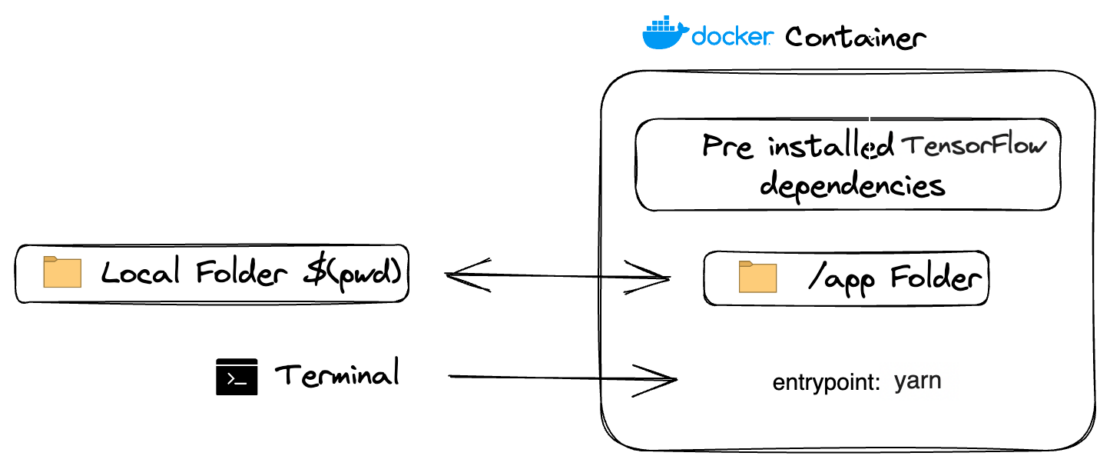

In this tutorial, you’ll learn how to build a fully functional face-detection demo app using TensorFlow.js and Docker. Figure 3 shows the file system architecture for this setup. Let’s get started.

Prerequisite

The following key components are essential to complete this walkthrough:

Deploying an ML face-detection app is a simple process involving the following steps:

- Clone the repository.

- Set up the required configuration files.

- Initialize TensorFlow.js.

- Train and run the model.

- Bring up the face-detection app.

We’ll explain each of these steps below.

Quick demo

If you’re in a hurry, you can bring up the complete app by running the following command:

docker run -p 1234:1234 harshmanvar/face-detection-tensorjs:slim-v1

Then open http://localhost:1234 in your browser.

Getting started

Cloning the project

To get started, you can clone the repository by running the following command:

git clone https://github.com/dockersamples/face-detection-tensorjs

We are utilizing the MediaPipe Face Detector demo for this demonstration. You first create a detector by choosing one of the models from SupportedModels, including MediaPipeFaceDetector.

For example:

const model = faceDetection.SupportedModels.MediaPipeFaceDetector;
const detectorConfig = {
  runtime: 'mediapipe', // or 'tfjs'
};
const detector = await faceDetection.createDetector(model, detectorConfig);

Then you can use the detector to detect faces:

const faces = await detector.estimateFaces(image);
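The sketch below shows one way the returned faces might be processed. It assumes each face carries a box object (xMin, yMin, width, height) and a keypoints array of named points; check that shape against the version of the face-detection package you use. The summarizeFaces helper and the sample data are hypothetical.

```javascript
// Assumed result shape: each face has a `box` with xMin, yMin, width, height
// and a `keypoints` array of {x, y, name}. Verify against your library version.
function summarizeFaces(faces) {
  return faces.map((face) => ({
    centerX: face.box.xMin + face.box.width / 2,
    centerY: face.box.yMin + face.box.height / 2,
    keypointNames: face.keypoints.map((point) => point.name),
  }));
}

// Hand-written sample standing in for the detector's output.
const sampleFaces = [{
  box: { xMin: 100, yMin: 50, xMax: 300, yMax: 250, width: 200, height: 200 },
  keypoints: [
    { x: 160, y: 120, name: 'rightEye' },
    { x: 240, y: 120, name: 'leftEye' },
  ],
}];
const summary = summarizeFaces(sampleFaces);
console.log(summary);
```

In the demo app, the array returned by detector.estimateFaces would take the place of sampleFaces.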

File: index.html:

<!DOCTYPE html>
<html>
<head>
  <meta charset="utf-8">
  <meta name="viewport" content="width=device-width,initial-scale=1,maximum-scale=1.0, user-scalable=no">
  <style>
    body {
      margin: 0;
    }
    #stats {
      position: relative;
      width: 100%;
      height: 80px;
    }
    #main {
      position: relative;
      margin: 0;
    }
    #canvas-wrapper {
      position: relative;
    }
  </style>
</head>
<body>
  <div id="stats"></div>
  <div id="main">
    <div class="container">
      <div class="canvas-wrapper">
        <canvas id="output"></canvas>
        <video id="video" playsinline style="
          -webkit-transform: scaleX(-1);
          transform: scaleX(-1);
          visibility: hidden;
          width: auto;
          height: auto;
          ">
        </video>
      </div>
    </div>
  </div>
</body>
<script src="https://cdnjs.cloudflare.com/ajax/libs/dat-gui/0.7.6/dat.gui.min.js"></script>
<script src="https://cdnjs.cloudflare.com/ajax/libs/stats.js/r16/Stats.min.js"></script>
<script src="src/index.js"></script>
</html>

The web application’s principal entry point is the index.html file. It includes the video element needed to display the real-time video stream from the user’s webcam and the basic HTML page structure. The relevant JavaScript scripts for the facial detection capabilities are also imported.

File: src/index.js:

import '@tensorflow/tfjs-backend-webgl';
import '@tensorflow/tfjs-backend-webgpu';

import * as tfjsWasm from '@tensorflow/tfjs-backend-wasm';

tfjsWasm.setWasmPaths(
    `https://cdn.jsdelivr.net/npm/@tensorflow/tfjs-backend-wasm@${
        tfjsWasm.version_wasm}/dist/`);

import * as faceDetection from '@tensorflow-models/face-detection';

import {Camera} from './camera';
import {setupDatGui} from './option_panel';
import {STATE, createDetector} from './shared/params';
import {setupStats} from './shared/stats_panel';
import {setBackendAndEnvFlags} from './shared/util';

let detector, camera, stats;
let startInferenceTime, numInferences = 0;
let inferenceTimeSum = 0, lastPanelUpdate = 0;
let rafId;

// Apply any changes made in the options panel since the last frame.
async function checkGuiUpdate() {
  if (STATE.isTargetFPSChanged || STATE.isSizeOptionChanged) {
    camera = await Camera.setupCamera(STATE.camera);
    STATE.isTargetFPSChanged = false;
    STATE.isSizeOptionChanged = false;
  }

  if (STATE.isModelChanged || STATE.isFlagChanged || STATE.isBackendChanged) {
    // Pause rendering while the detector is rebuilt.
    STATE.isModelChanged = true;

    window.cancelAnimationFrame(rafId);

    if (detector != null) {
      detector.dispose();
    }

    if (STATE.isFlagChanged || STATE.isBackendChanged) {
      await setBackendAndEnvFlags(STATE.flags, STATE.backend);
    }

    try {
      detector = await createDetector(STATE.model);
    } catch (error) {
      detector = null;
      alert(error);
    }

    STATE.isFlagChanged = false;
    STATE.isBackendChanged = false;
    STATE.isModelChanged = false;
  }
}

function beginEstimateFaceStats() {
  startInferenceTime = (performance || Date).now();
}

// Update the FPS panel at most once per second with the average inference time.
function endEstimateFaceStats() {
  const endInferenceTime = (performance || Date).now();
  inferenceTimeSum += endInferenceTime - startInferenceTime;
  ++numInferences;

  const panelUpdateMilliseconds = 1000;
  if (endInferenceTime - lastPanelUpdate >= panelUpdateMilliseconds) {
    const averageInferenceTime = inferenceTimeSum / numInferences;
    inferenceTimeSum = 0;
    numInferences = 0;
    stats.customFpsPanel.update(
        1000.0 / averageInferenceTime, 120);
    lastPanelUpdate = endInferenceTime;
  }
}

async function renderResult() {
  // Wait until the video has enough data to render a frame.
  if (camera.video.readyState < 2) {
    await new Promise((resolve) => {
      camera.video.onloadeddata = () => {
        // Resolve with the element explicitly instead of the implicit global `video`.
        resolve(camera.video);
      };
    });
  }

  let faces = null;

  if (detector != null) {
    beginEstimateFaceStats();

    try {
      faces =
          await detector.estimateFaces(camera.video, {flipHorizontal: false});
    } catch (error) {
      detector.dispose();
      detector = null;
      alert(error);
    }

    endEstimateFaceStats();
  }

  camera.drawCtx();

  if (faces && faces.length > 0 && !STATE.isModelChanged) {
    camera.drawResults(
        faces, STATE.modelConfig.boundingBox, STATE.modelConfig.keypoints);
  }
}

// Main loop: apply panel changes, render one frame, then schedule the next.
async function renderPrediction() {
  await checkGuiUpdate();

  if (!STATE.isModelChanged) {
    await renderResult();
  }

  rafId = requestAnimationFrame(renderPrediction);
};

async function app() {
  const urlParams = new URLSearchParams(window.location.search);

  await setupDatGui(urlParams);
  stats = setupStats();
  camera = await Camera.setupCamera(STATE.camera);
  await setBackendAndEnvFlags(STATE.flags, STATE.backend);
  detector = await createDetector();

  renderPrediction();
};

app();

The src/index.js file contains the face-detection logic. It loads TensorFlow.js and runs real-time face detection on the video stream using the pretrained face-detection model. The file coordinates camera setup, processing of the video frames, and drawing bounding boxes around the faces detected in the video feed.
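The frame-rate panel in this file averages inference times and refreshes about once per second. The sketch below isolates that idea in a small, self-contained helper; createFpsTracker and its record method are illustrative names, not part of the demo code.

```javascript
// Accumulate inference durations and report an average FPS at most once per interval.
function createFpsTracker(intervalMs = 1000) {
  let sum = 0;
  let count = 0;
  let lastUpdate = 0;
  return {
    // `start` and `end` are timestamps in milliseconds.
    record(start, end) {
      sum += end - start;
      count += 1;
      if (end - lastUpdate < intervalMs) return null;
      const fps = 1000 / (sum / count);
      sum = 0;
      count = 0;
      lastUpdate = end;
      return fps;
    },
  };
}

const tracker = createFpsTracker(1000);
const first = tracker.record(0, 20);      // still inside the first interval
const second = tracker.record(980, 1020); // crosses the 1000 ms mark
console.log(first, second);
```

Averaging over an interval, rather than reporting every frame, keeps the displayed number stable enough to read.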

File: src/camera.js:

import {VIDEO_SIZE} from './shared/params';
import {drawResults, isMobile} from './shared/util';

export class Camera {
  constructor() {
    this.video = document.getElementById('video');
    this.canvas = document.getElementById('output');
    this.ctx = this.canvas.getContext('2d');
  }

  // Request webcam access and size the video and canvas to the stream.
  static async setupCamera(cameraParam) {
    if (!navigator.mediaDevices || !navigator.mediaDevices.getUserMedia) {
      throw new Error(
          'Browser API navigator.mediaDevices.getUserMedia not available');
    }

    const {targetFPS, sizeOption} = cameraParam;
    const $size = VIDEO_SIZE[sizeOption];
    const videoConfig = {
      'audio': false,
      'video': {
        facingMode: 'user',
        width: isMobile() ? VIDEO_SIZE['360 X 270'].width : $size.width,
        height: isMobile() ? VIDEO_SIZE['360 X 270'].height : $size.height,
        frameRate: {
          ideal: targetFPS,
        },
      },
    };

    const stream = await navigator.mediaDevices.getUserMedia(videoConfig);

    const camera = new Camera();
    camera.video.srcObject = stream;

    await new Promise((resolve) => {
      camera.video.onloadedmetadata = () => {
        // Resolve with the element explicitly instead of the implicit global `video`.
        resolve(camera.video);
      };
    });

    camera.video.play();

    const videoWidth = camera.video.videoWidth;
    const videoHeight = camera.video.videoHeight;
    // Must set below two lines, otherwise video element doesn't show.
    camera.video.width = videoWidth;
    camera.video.height = videoHeight;

    camera.canvas.width = videoWidth;
    camera.canvas.height = videoHeight;
    const canvasContainer = document.querySelector('.canvas-wrapper');
    canvasContainer.style = `width: ${videoWidth}px; height: ${videoHeight}px`;

    // Mirror the canvas horizontally so the output behaves like a mirror.
    camera.ctx.translate(camera.video.videoWidth, 0);
    camera.ctx.scale(-1, 1);

    return camera;
  }

  // Draw the current video frame onto the canvas.
  drawCtx() {
    this.ctx.drawImage(
        this.video, 0, 0, this.video.videoWidth, this.video.videoHeight);
  }

  drawResults(faces, boundingBox, keypoints) {
    drawResults(this.ctx, faces, boundingBox, keypoints);
  }
}

The configuration for the camera’s width, audio, and other setup-related items is managed in camera.js.
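To show how those settings turn into a getUserMedia request, here is a sketch that builds the constraints object as a pure function. The VIDEO_SIZE table below is an assumed stand-in for the one in src/shared/params.js, and buildVideoConfig is a hypothetical helper; only the shape of the constraints follows the camera.js code above.

```javascript
// Assumed size table; the real values live in src/shared/params.js.
const VIDEO_SIZE = {
  '640 X 480': { width: 640, height: 480 },
  '360 X 270': { width: 360, height: 270 },
};

// Build getUserMedia constraints; mobile devices fall back to the smaller size.
function buildVideoConfig({ targetFPS, sizeOption }, isMobile) {
  const size = isMobile ? VIDEO_SIZE['360 X 270'] : VIDEO_SIZE[sizeOption];
  return {
    audio: false,
    video: {
      facingMode: 'user',
      width: size.width,
      height: size.height,
      frameRate: { ideal: targetFPS },
    },
  };
}

const desktop = buildVideoConfig({ targetFPS: 60, sizeOption: '640 X 480' }, false);
const mobile = buildVideoConfig({ targetFPS: 30, sizeOption: '640 X 480' }, true);
console.log(desktop.video.width, mobile.video.width);
```

Keeping the constraints in a pure function like this makes the size and frame-rate logic easy to check without a camera or a browser.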

File: .babelrc:

The .babelrc file is used to configure Babel, a JavaScript compiler, specifying presets and plugins that define the transformations to be applied during code transpilation.

shared % tree
.
├── option_panel.js
├── params.js
├── stats_panel.js
└── util.js

1 directory, 4 files

The parameters and other shared files found in the src/shared folder are needed to run and access the camera, checks, and parameter values.

Defining services using a Compose file

Here’s how our services appear within a Docker Compose file:

services:
  tensorjs:
    #build: .
    image: harshmanvar/face-detection-tensorjs:v2
    ports:
      - 1234:1234
    volumes:
      - ./:/app
      - /app/node_modules
    command: watch

Your sample application has the following parts:

- The tensorjs service is based on the harshmanvar/face-detection-tensorjs:v2 image.

- This image contains the necessary dependencies and code to run a face detection system using TensorFlow.js.

- It publishes port 1234 so that you can reach the app from a browser on the host.

- The volume ./:/app sets up a bind mount, linking the current directory (represented by ./) on the host machine to the /app directory within the container. This allows you to share files and code between your host machine and the container.

- The volume /app/node_modules keeps the dependencies installed in the image from being hidden by the bind mount.

- The watch command specifies the command to run within the container. Its name suggests that it watches the source files and rebuilds the app when they change.

Building the image

It’s time to build the development image and install the dependencies to launch the face-detection model.

docker build -t tensor-development:v1 .

Running the container

docker run -p 1234:1234 -v $(pwd):/app -v /app/node_modules tensor-development:v1 watch

Bringing up the container services

You can launch the application by running the following command:

docker compose up -d

Then, use the docker compose ps command to confirm that your stack is running correctly. Your terminal will produce the following output:

docker compose ps
NAME         IMAGE         COMMAND        SERVICE    STATUS          PORTS
tensorflow   tensorjs:v2   "yarn watch"   tensorjs   Up 48 seconds   0.0.0.0:1234->1234/tcp

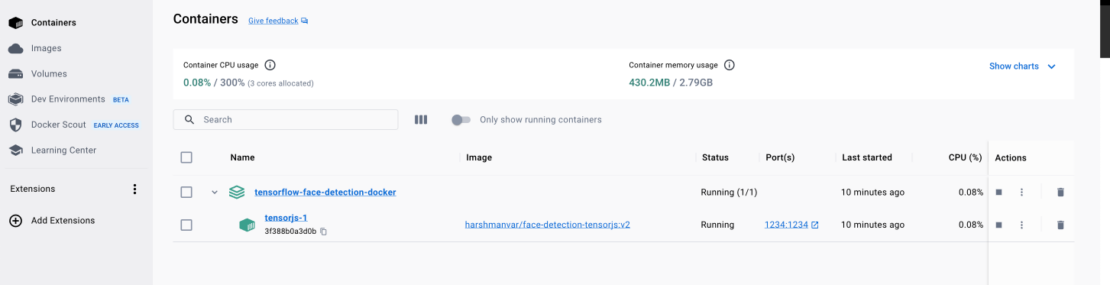

Viewing the containers via Docker Dashboard

You can also use Docker Dashboard to view your container’s ID and easily access or manage your application container (Figure 5).

Conclusion

Well done! You have learned how to use a pretrained machine learning model with JavaScript in a web application, thanks to TensorFlow.js. In this article, we have demonstrated how Docker Compose lets you quickly create and deploy a fully functional ML face-detection demo app, using just one YAML file.

With this newfound expertise, you can now take this guide as a foundation to build even more sophisticated applications with just a few additional steps. The possibilities are endless, and your ML journey has just begun!

Learn more

- Get the latest release of Docker Desktop.

- Vote on what’s next! Check out our public roadmap.

- Have questions? The Docker community is here to help.

- New to Docker? Get started.