This post was contributed by Sophia Parafina.

Keeping pace with the rapid advancements in artificial intelligence can be overwhelming. Every week, new Large Language Models (LLMs), vector databases, and innovative techniques emerge, potentially transforming the landscape of AI/ML development. Our extensive collaboration with developers has uncovered numerous creative and effective strategies to harness Docker in AI development.

This quick guide shows how to use Docker to containerize llamafile, an executable that bundles everything needed to run an LLM chatbot into a single file. By the end, you will have a working chatbot running in a container for experimentation.

Llamafile’s concept of bringing together LLMs and local execution has sparked a high level of interest in the GenAI space, as it aims to simplify the process of getting a functioning LLM chatbot running locally.

Containerize llamafile

Llamafile is a Mozilla project that runs open source LLMs, such as Llama-2-7B, Mistral 7B, or any other model in the GGUF format. The Dockerfile below uses a multi-stage build: the first stage uses debian:trixie as the base image to build llamafile, and the final image uses debian:stable as its base and runs llamafile in server mode.

To get started, copy, paste, and save the following in a file named Dockerfile.

# Use debian trixie for gcc13
FROM debian:trixie AS builder

# Set work directory
WORKDIR /download

# Configure build container and build llamafile
RUN mkdir out && \
    apt-get update && \
    apt-get install -y curl git gcc make && \
    git clone https://github.com/Mozilla-Ocho/llamafile.git && \
    curl -L -o ./unzip https://cosmo.zip/pub/cosmos/bin/unzip && \
    chmod 755 unzip && mv unzip /usr/local/bin && \
    cd llamafile && make -j8 LLAMA_DISABLE_LOGS=1 && \
    make install PREFIX=/download/out

# Create container
FROM debian:stable AS out

# Create a non-root user
RUN addgroup --gid 1000 user && \
    adduser --uid 1000 --gid 1000 --disabled-password --gecos "" user

# Switch to user
USER user

# Set working directory
WORKDIR /usr/local

# Copy llamafile and man pages
COPY --from=builder /download/out/bin ./bin
COPY --from=builder /download/out/share ./share/man

# Expose 8080 port.
EXPOSE 8080

# Set entrypoint.
ENTRYPOINT ["/bin/sh", "/usr/local/bin/llamafile"]

# Set default command.
CMD ["--server", "--host", "0.0.0.0", "-m", "/model"]

To build the container, run:

docker build -t llamafile .

Running the llamafile container

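To run the container, first download a model in GGUF format, such as Mistral-7b-v0.1. The example below assumes the model file is saved in the model directory. The file is bind-mounted into the container at /model, which is the path the default command passes to llamafile.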

$ docker run -d -v ./model/mistral-7b-v0.1.Q5_K_M.gguf:/model -p 8080:8080 llamafile
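Before starting the container, it can help to confirm that the model file actually exists on the host. If the host path given to -v does not exist, Docker creates a directory there instead, and llamafile then has nothing to load. The following is a minimal sketch, not part of llamafile or Docker: the helper name looks_like_gguf is hypothetical, and it only checks that the file begins with the four-byte GGUF magic value, not that the model is complete or valid.

```python
from pathlib import Path

# GGUF files begin with these four ASCII bytes.
GGUF_MAGIC = b"GGUF"


def looks_like_gguf(path):
    """Return True if `path` is a regular file that starts with the GGUF magic bytes.

    Hypothetical helper for illustration; it does not validate the rest of the file.
    """
    p = Path(path)
    if not p.is_file():
        # Missing paths and directories both fail here.
        return False
    with p.open("rb") as f:
        return f.read(4) == GGUF_MAGIC
```

For example, looks_like_gguf("./model/mistral-7b-v0.1.Q5_K_M.gguf") should return True for the file used in the command above, and False if the download is missing or the path points at a directory.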

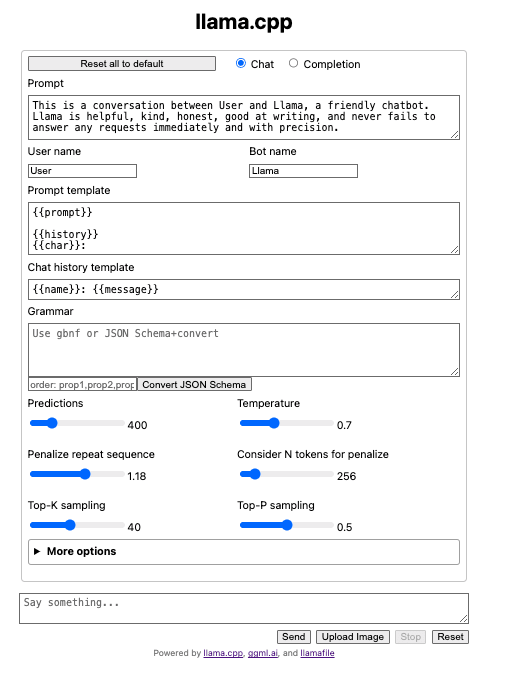

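Once the container is running, open http://localhost:8080 in a browser to reach the llama.cpp interface (Figure 1).

The server also exposes an OpenAI-compatible chat completions endpoint, which you can query with curl: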

$ curl -s http://localhost:8080/v1/chat/completions -H "Content-Type: application/json" -d '{
  "model": "gpt-3.5-turbo",
  "messages": [
    {
      "role": "system",
      "content": "You are a poetic assistant, skilled in explaining complex programming concepts with creative flair."
    },
    {
      "role": "user",
      "content": "Compose a poem that explains the concept of recursion in programming."
    }
  ]
}' | python3 -c '
import json
import sys
json.dump(json.load(sys.stdin), sys.stdout, indent=2)
print()
'
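The same request can be sent from a script. The sketch below uses only the Python standard library and mirrors the curl example above. It assumes the container is published on localhost:8080 as in the docker run command, and that the response follows the OpenAI chat completion shape (choices[0].message.content). The function names build_payload, extract_reply, and chat are illustrative, not part of any llamafile API.

```python
import json
import urllib.request

# Assumed endpoint: the port published by the docker run example.
URL = "http://localhost:8080/v1/chat/completions"


def build_payload(user_prompt, system_prompt="You are a helpful assistant."):
    """Build an OpenAI-style chat completion request body."""
    return {
        "model": "gpt-3.5-turbo",  # same placeholder model name as the curl example
        "messages": [
            {"role": "system", "content": system_prompt},
            {"role": "user", "content": user_prompt},
        ],
    }


def extract_reply(response):
    """Pull the assistant's text out of an OpenAI-style response dict."""
    return response["choices"][0]["message"]["content"]


def chat(user_prompt, url=URL, timeout=120):
    """POST the prompt to the server and return the assistant's reply."""
    body = json.dumps(build_payload(user_prompt)).encode("utf-8")
    request = urllib.request.Request(
        url, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return extract_reply(json.load(resp))
```

With the container running, print(chat("Compose a poem that explains the concept of recursion in programming.")) should print the model's answer. The generous timeout is a guess to allow for slow CPU-only inference; adjust it to your hardware.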

Llamafile has many parameters to tune the model. You can see them with man llamafile or llamafile --help. Parameters can be set in the Dockerfile CMD instruction, or passed after the image name in docker run, which replaces the default CMD.
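As a rough illustration of the run-time option, the sketch below assembles a docker run command that repeats the image's default arguments and appends extra flags. Arguments placed after the image name replace CMD entirely, so the defaults have to be restated. The helper docker_run_command is hypothetical, and the -c 2048 flag (context size, inherited from llama.cpp) is an assumption that you should confirm against llamafile --help for your build.

```python
import shlex

# Arguments baked into the image by the Dockerfile's CMD.
DEFAULT_ARGS = ["--server", "--host", "0.0.0.0", "-m", "/model"]


def docker_run_command(model_path, extra_args=(), image="llamafile", port=8080):
    """Return the argv for `docker run` with extra llamafile flags appended.

    Hypothetical helper: anything after the image name replaces CMD,
    so the defaults are repeated before the extra flags.
    """
    return [
        "docker", "run", "-d",
        "-v", f"{model_path}:/model",
        "-p", f"{port}:8080",
        image,
        *DEFAULT_ARGS,
        *extra_args,
    ]


# Example: request a 2048-token context window (assumed flag, see lead-in).
cmd = docker_run_command("./model/mistral-7b-v0.1.Q5_K_M.gguf", ["-c", "2048"])
print(shlex.join(cmd))
```

The resulting list can be passed to subprocess.run(cmd, check=True), or the printed line can be pasted into a shell.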

Now that you have a containerized llamafile, you can run the container with the LLM of your choice and begin your testing and development journey.

What’s next?

To continue your AI development journey, read the Docker GenAI guide, review the additional AI content on the blog, and check out our resources.

Learn more

- Read the Docker AI/ML blog post collection.

- Download the Docker GenAI guide.

- Read the Llamafile announcement post on Mozilla.org.
