At DockerCon 2023, together with our partners Neo4j, LangChain, and Ollama, we announced a new GenAI Stack. We have brought together leading technologies in the generative artificial intelligence (GenAI) space to build a solution that lets developers deploy a full GenAI stack with only a few clicks.

Here’s what’s included in the new GenAI Stack:

1. Preconfigured LLMs: We provide preconfigured large language models (LLMs), such as Llama 2, GPT-3.5, and GPT-4, to jumpstart your AI projects.

2. Ollama management: Ollama simplifies the local management of open source LLMs, making your AI development process smoother.

3. Neo4j as the default database: Neo4j provides graph and native vector search capabilities. These help uncover patterns and relationships in your data, which can improve the speed and accuracy of AI/ML models. Neo4j also serves as long-term memory for these models.

4. Neo4j knowledge graphs: Use Neo4j knowledge graphs to ground LLMs for more precise GenAI predictions and outcomes.

5. LangChain orchestration: LangChain, a framework for developing applications powered by LLMs, handles communication among the LLM, your application, and the database, along with a robust vector index. The stack also includes LangSmith, an exciting new way to debug, test, evaluate, and monitor your LLM applications.

6. Comprehensive support: To support your GenAI journey, we provide a range of helpful tools, code templates, how-to guides, and GenAI best practices, so you have the guidance you need.

Conclusion

The GenAI Stack simplifies AI/ML integration and makes it accessible to developers. Docker is committed to fostering innovation and collaboration, and we’re excited to see the practical applications and solutions that will emerge from this ecosystem. Join us as we make AI/ML more accessible and straightforward for developers everywhere.

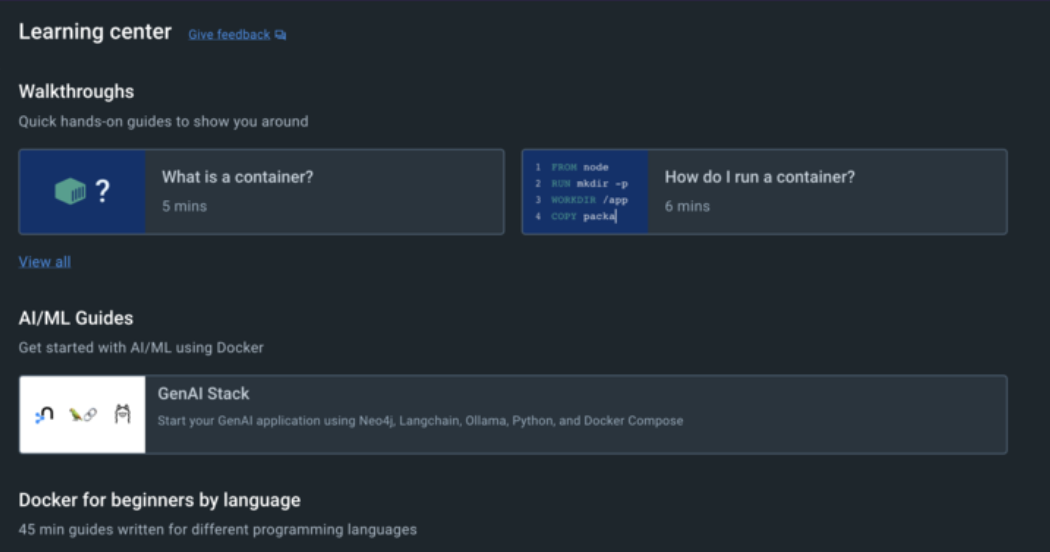

The GenAI Stack is available in Early Access now and is accessible from the Docker Desktop Learning Center or on GitHub.

Participate in our Docker AI/ML Hackathon to show off your most creative AI/ML solutions built on Docker. Read our blog post “Announcing Docker AI/ML Hackathon” to learn more.

At DockerCon 2023, Docker also announced its first AI-powered product, Docker AI. Sign up now for early access to Docker AI.

Learn more

- Find the GenAI Stack on GitHub.

- Join the Docker AI/ML Hackathon.

- Sign up now for early access to Docker AI.

- Get the latest release of Docker Desktop.

- Vote on what’s next! Check out our public roadmap.

- Have questions? The Docker community is here to help.

- New to Docker? Get started.