At DockerCon 2023, we announced our intention to use OpenPubkey, a project jointly developed by BastionZero and Docker and recently open-sourced and donated to the Linux Foundation, as part of our signing solution for Docker Official Images (DOI). We provided a detailed description of our signing approach in the DockerCon talk “Building the Software Supply Chain on Docker Official Images.”

In this post, we walk you through the updated DOI signing strategy. We start with how basic container image signing works and gradually build up to what is currently a common image signing flow, which involves public/private key pairs, certificate authorities, The Update Framework (TUF), timestamp logs, transparency logs, and identity verification using OpenID Connect.

After describing these mechanics, we show how OpenPubkey, with a few recent enhancements included, can be leveraged to smooth the flow and decrease the number of third-party entities the verifier is required to trust.

Hopefully, this incremental narrative will be useful to those new to software artifact signing and those just looking for how this proposal differs from current approaches. As always, Docker is committed to improving the developer experience, increasing the time developers spend on adding value, and decreasing the amount of time they spend on toil.

The approach described in this post aims to allow Docker users to improve the security of their software supply chain by making it easier to verify the integrity and origin of the Docker Official Images they use every day.

Signing container images

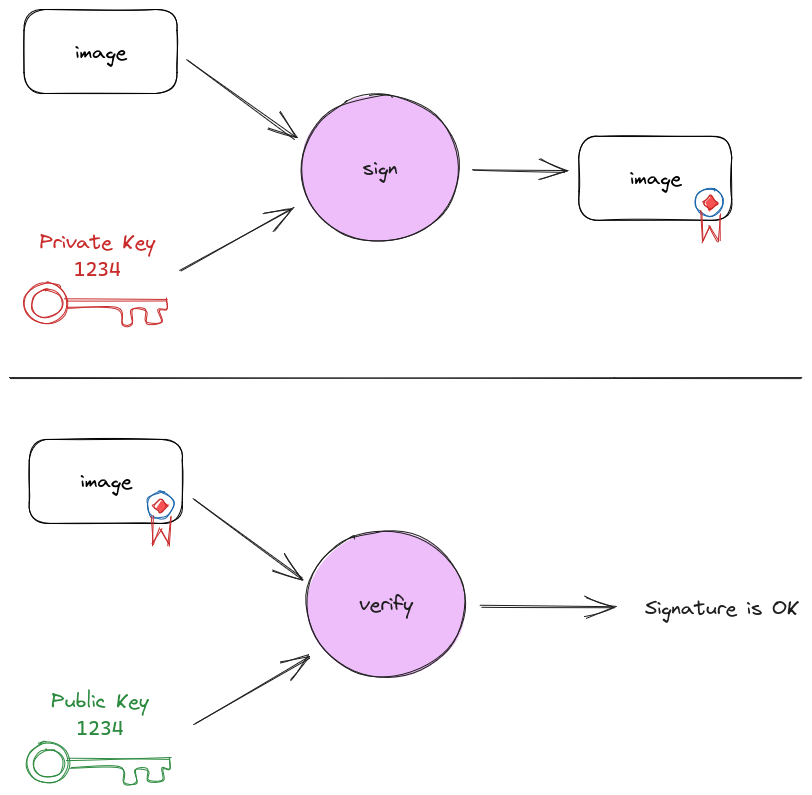

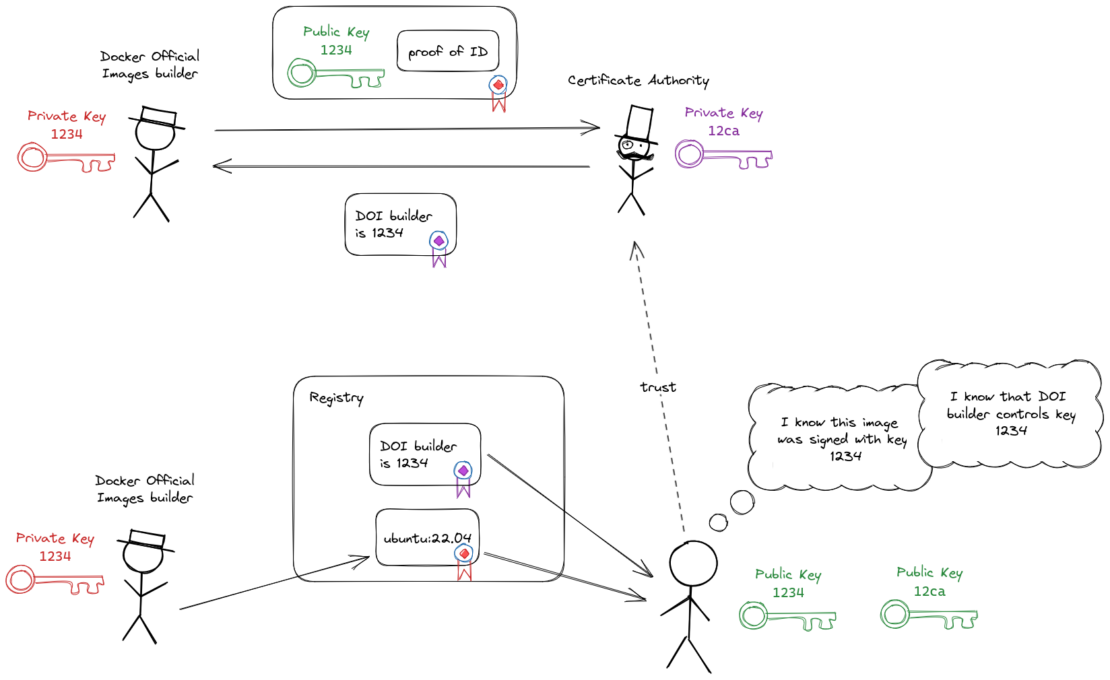

An entity can prove that it built a container image by creating a digital signature and adding it to the image. This process is called signing. To sign an image, the entity can create a public/private key pair. The private key must be kept secret, and the public key can be shared publicly.

When an image is signed, a signature is produced using the private key and the digest of the image. Anyone with the public key can then validate that the signature was created by someone who has the private key (Figure 1).
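As a rough illustration of the sign-then-verify step, the sketch below uses textbook RSA with a deliberately tiny, hard-coded key so that it runs with only the Python standard library. The key parameters and function names are assumptions made for this example. Real image signing uses a vetted cryptographic library, proper padding, and much larger keys.

```python
import hashlib

# Toy textbook-RSA key built from two known Mersenne primes.
# Illustration only: no padding, tiny modulus, NOT secure.
P = 2**61 - 1
Q = 2**89 - 1
N = P * Q                               # public modulus
E = 65537                               # public exponent
D = pow(E, -1, (P - 1) * (Q - 1))       # private exponent (must stay secret)


def image_digest(image_bytes: bytes) -> int:
    """Hash the image and reduce the digest into the toy key's range."""
    digest = hashlib.sha256(image_bytes).digest()
    return int.from_bytes(digest, "big") % N


def sign(image_bytes: bytes) -> int:
    """Produce a signature from the private key and the image digest."""
    return pow(image_digest(image_bytes), D, N)


def verify(image_bytes: bytes, signature: int) -> bool:
    """Check the signature using only the public values N and E."""
    return pow(signature, E, N) == image_digest(image_bytes)


image = b"example image contents"
signature = sign(image)
print(verify(image, signature))                # the signed image verifies
print(verify(image + b"tampered", signature))  # a modified image does not
```

Note that the verifier never needs `D`. It only learns that whoever produced the signature held the private key that matches the public one.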

Let’s walk through how container images can be signed, starting with a naive approach, building up to the current status quo in image signing, and ending with Docker’s proposed solution. We’ll use signing Docker Official Images (DOI) as part of the DOI build process as our example since that is the use case for which this solution has been designed.

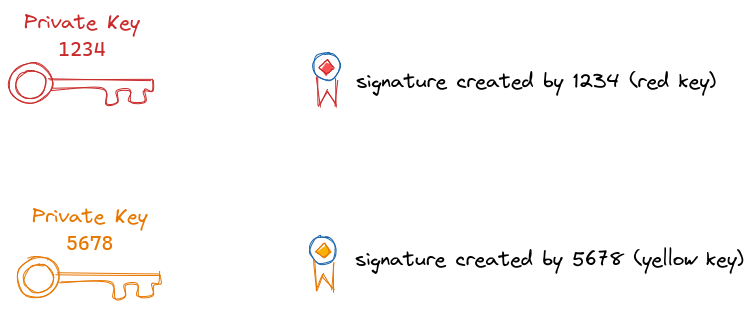

In the diagrams throughout this post, we’ll use colored seals to represent signatures. The color of the seal matches the color of the private key it was signed with (Figure 2).

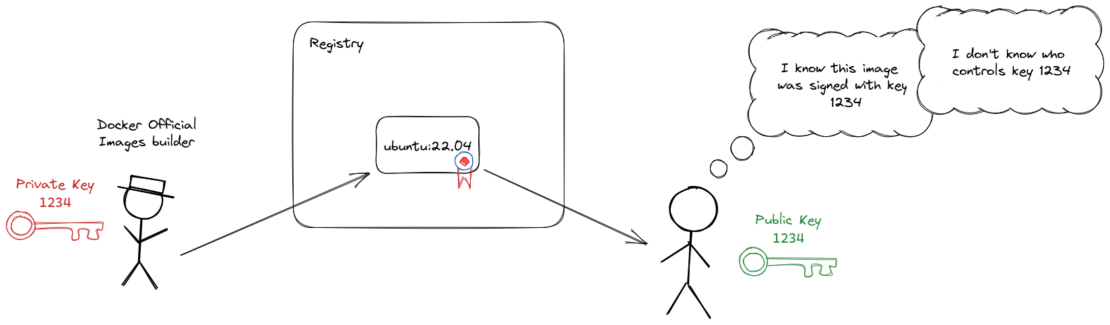

Note that all the verifier knows after verifying an image signature with a public key is that the image was signed with the private key associated with the public key. To trust the image, the verifier must verify the signature and the identity of the key pair owner (Figure 3).

Identity and certificates

How do you verify the owner of a public/private key pair? That is the purpose of a certificate, a simple data structure including a public key and a name. The certificate binds the name, known as the subject, to the public key. This data structure is normally signed by a Certificate Authority (CA), known as the issuer of the certificate.

Certificates can be distributed alongside signatures that were made with the corresponding key. This means that consumers of images don’t need to verify the owner of every public key used to sign any image. They can instead rely on a much smaller set of CA certificates. This is analogous to the way web browsers have a set of a few dozen root CA certificates to establish trust with a myriad of websites using HTTPS.

Going back to the example of DOI signing, if we distribute a certificate binding the 1234 public key with the Docker Official Images (DOI) builder name, anybody can verify that an image signed by the 1234 private key was signed by the DOI builder, as long as they trust the CA that issued the certificate (Figure 4).

Trust policy

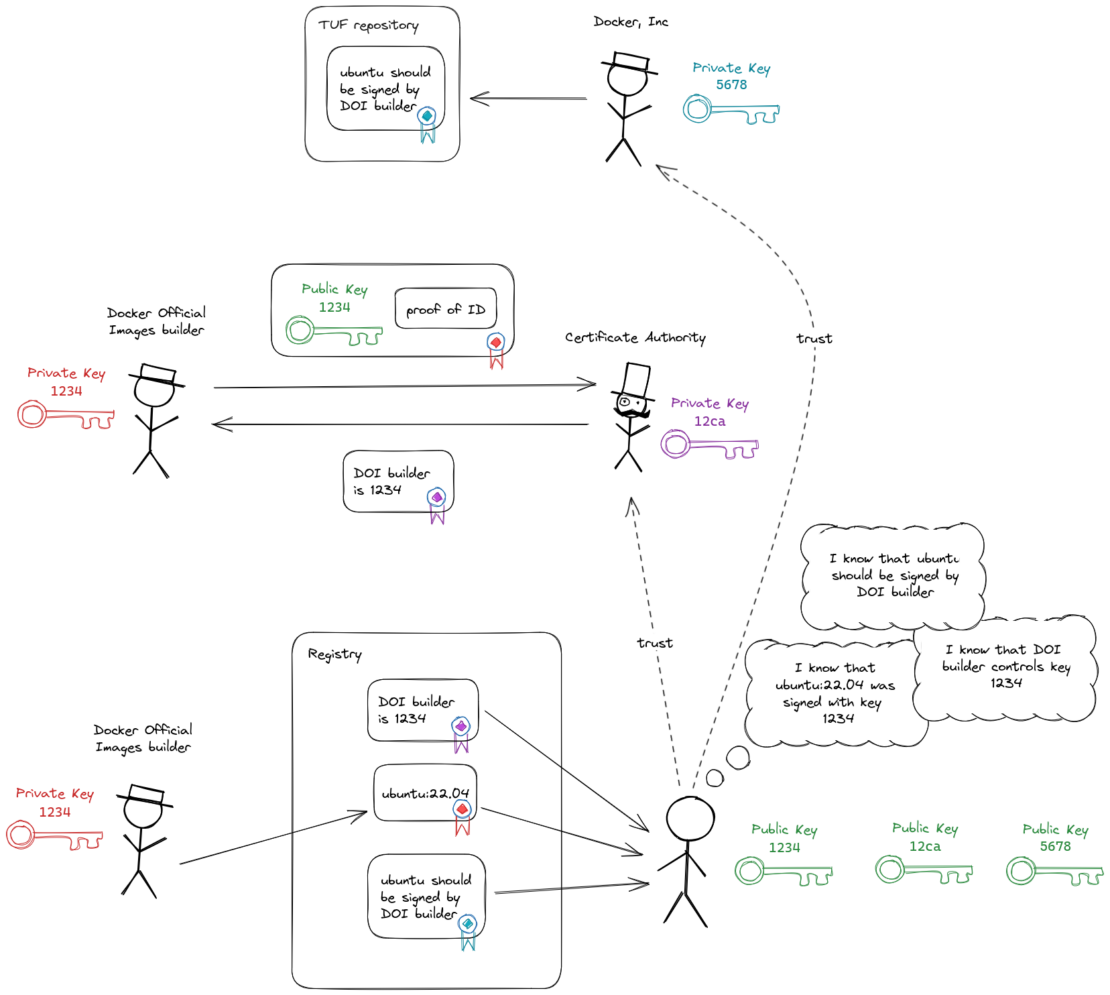

Certificates solve the problem of which public keys belong to which entities, but how do we know which entity was supposed to sign an image? For this, we need trust policy, some signed metadata detailing which entities are allowed to sign an image. For Docker Official Images, trust policy will state that our DOI build servers must sign the images.
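To make the idea concrete, here is a minimal sketch of how a client might consult trust policy once a signature has already been verified. The policy layout, the `docker.io/library/` prefix match, and the function names are assumptions for illustration, not the format Docker distributes.

```python
# Hypothetical trust policy: image name prefix -> identities allowed to sign.
TRUST_POLICY = {
    "docker.io/library/": {"DOI builder"},
}


def allowed_signers(image_name: str) -> set:
    """Return the identities the policy allows to sign this image."""
    for prefix, signers in TRUST_POLICY.items():
        if image_name.startswith(prefix):
            return signers
    return set()


def is_trusted(image_name: str, signer_identity: str, signature_valid: bool) -> bool:
    """An image is trusted only if the signature verifies AND the verified
    identity is one the policy expects for that image."""
    return signature_valid and signer_identity in allowed_signers(image_name)


print(is_trusted("docker.io/library/nginx", "DOI builder", True))
print(is_trusted("docker.io/library/nginx", "someone else", True))
```

A valid signature from an unexpected identity is rejected, which is the gap that certificates alone leave open.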

We need to ensure that trust policy is updated in a secure way, because if a malicious party can change a policy, then they can trick clients into believing the malicious party’s keys are allowed to sign images they otherwise should not be allowed to sign. To ensure secure trust policy updates, we will use The Update Framework (TUF) (see the TUF specification), a mechanism for securely distributing updates to arbitrary files.

A TUF repository uses a hierarchy of keys to sign indexes of the files it contains, called manifests. Manifests are signed with keys that are kept online to enable automation, and the online signing keys are in turn signed with offline root keys. This enables the repository to be recovered if an online key is compromised.

A client that wants to download an update to a file in a TUF repository must first retrieve the latest copy of the signed manifests and make sure the signatures on the manifests are verified. Then they can retrieve the actual files.
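The last step of that flow, checking a downloaded file against the manifest, can be sketched as follows. This assumes the signatures on the manifest have already been verified, and it uses a simplified, hypothetical manifest layout (a length and a SHA-256 hash per file) rather than the exact TUF metadata format.

```python
import hashlib


def verify_target(manifest: dict, name: str, content: bytes) -> bool:
    """Check a downloaded file against its entry in a verified manifest."""
    entry = manifest.get(name)
    if entry is None:
        return False                      # file is not listed at all
    if len(content) != entry["length"]:
        return False                      # wrong size
    return hashlib.sha256(content).hexdigest() == entry["sha256"]


# Example: a manifest describing a single trust policy file.
policy = b'{"signers": ["DOI builder"]}'
manifest = {
    "policy.json": {
        "length": len(policy),
        "sha256": hashlib.sha256(policy).hexdigest(),
    }
}

print(verify_target(manifest, "policy.json", policy))         # unchanged file
print(verify_target(manifest, "policy.json", policy + b" "))  # altered file
```

Because the check depends only on the signed manifest, the file itself can come from an untrusted mirror.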

Once a TUF repository has been created, it can be distributed by any means we choose, even if the distribution mechanism is not trusted. We will distribute it using the Docker Hub registry (Figure 5).

Certificate expiry and timestamping

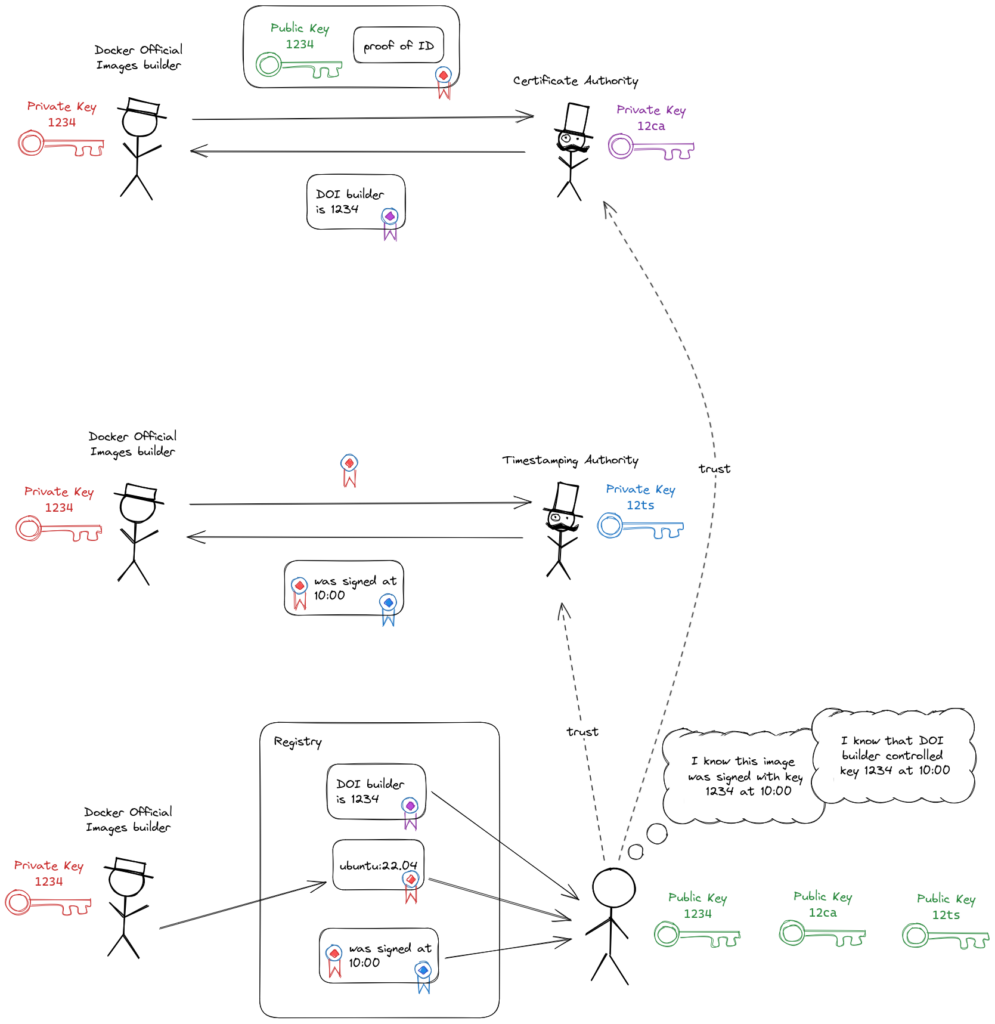

In the preceding section, we described a certificate as simply a binding from an identity to a public key. In reality, certificates do contain some additional data. One important detail is the expiry time. Usually, certificates should not be trusted after their expiry time. Signatures on images (as in Figure 5) will only be valid until the attached certificate’s expiry time. A limited life span for a signature isn’t desirable because we want images to be long-lasting (longer-lasting than a certificate).

This problem can be solved by using a Timestamp Authority (TSA). A TSA will receive some data, bundle the data with the current time, and sign the bundle before returning it. Using a TSA allows anybody who trusts the TSA to verify that the data existed at the bundled time.

We can send the signature to a TSA and have it bundle the current timestamp with the signature. Then, we can use the bundled timestamp as the ‘current time’ when verifying the certificate. The timestamp proves that the certificate had not expired at the time the signature was created. The TSA’s certificate will also expire, at which point all of the signed timestamps it has created will also expire. TSA certificates typically last for a long time (10+ years) (Figure 6).
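The time checks described here can be sketched as below, assuming the TSA's signature over the timestamp has already been verified. The `Validity` type, the function names, and the example dates are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass
class Validity:
    """Validity window of a certificate."""
    not_before: datetime
    not_after: datetime

    def covers(self, moment: datetime) -> bool:
        return self.not_before <= moment <= self.not_after


def signature_time_ok(signing_cert: Validity, tsa_cert: Validity,
                      timestamp: datetime, now: datetime) -> bool:
    """The signing certificate is checked at the TSA's bundled time,
    while the TSA's own certificate must still be valid today."""
    return (signing_cert.covers(timestamp)
            and tsa_cert.covers(timestamp)
            and tsa_cert.covers(now))


def utc(year: int, month: int, day: int, hour: int = 0) -> datetime:
    return datetime(year, month, day, hour, tzinfo=timezone.utc)


signing_cert = Validity(utc(2024, 1, 1), utc(2024, 1, 2))   # short-lived
tsa_cert = Validity(utc(2020, 1, 1), utc(2035, 1, 1))       # long-lived
timestamp = utc(2024, 1, 1, 12)                              # when it was signed

# Years later the signing certificate has expired, but the check still passes.
print(signature_time_ok(signing_cert, tsa_cert, timestamp, utc(2030, 6, 1)))
```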

OpenID Connect

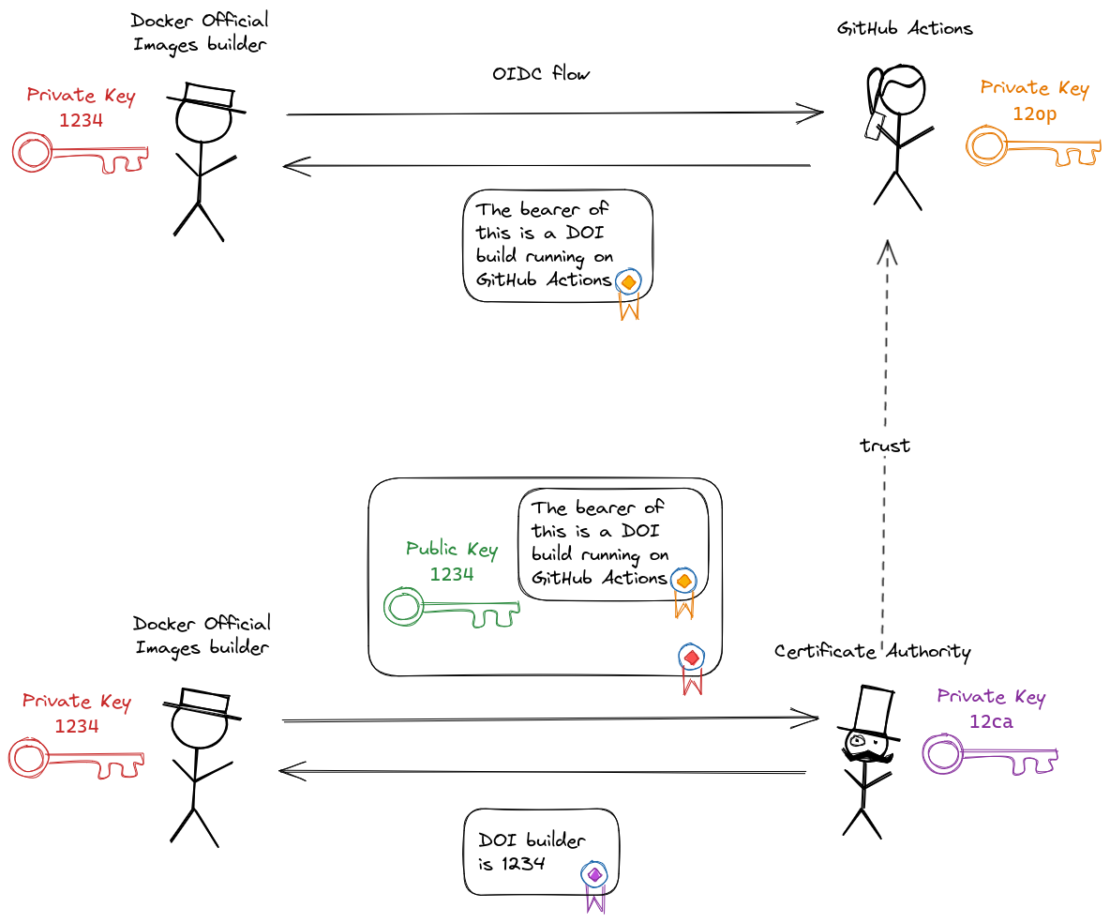

Thus far, we’ve ignored how the CA verifies the signer’s identity (the “proof of ID” box in the preceding diagrams). How this verification works depends on the CA, but one approach is to outsource this verification to a third party using OpenID Connect (OIDC).

We won’t describe the entire OIDC flow, but the primary steps are:

- The signer authenticates with the OIDC provider (e.g., Google, GitHub, or Microsoft).

- The OIDC provider issues an ID token, which is a signed token that the signer can use to prove their identity.

- The ID token includes an audience, which specifies the intended party that should use the ID token to verify the identity of the signer. The intended audience will be the Certificate Authority. The ID token must be rejected by any other audience.

The CA must trust the OIDC provider and understand how to verify the ID token’s audience claim.
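The audience check can be sketched as follows. The example decodes the claims of a JWT-shaped token and compares the `aud` claim, which may be a single string or a list. Signature verification is deliberately left out, so this is only part of what a real consumer must do, and the issuer and audience URLs are placeholders.

```python
import base64
import json


def _b64url(data: bytes) -> str:
    """Base64url-encode without padding, as used in JWTs."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode()


def decode_claims(id_token: str) -> dict:
    """Decode the claims segment of a JWT. Does NOT verify the signature."""
    payload = id_token.split(".")[1]
    padded = payload + "=" * (-len(payload) % 4)
    return json.loads(base64.urlsafe_b64decode(padded))


def audience_matches(claims: dict, expected: str) -> bool:
    """Accept the token only if it was issued for `expected`."""
    aud = claims.get("aud")
    if isinstance(aud, str):
        return aud == expected
    if isinstance(aud, list):
        return expected in aud
    return False


# Example token with a placeholder signature segment.
header = _b64url(json.dumps({"alg": "RS256", "typ": "JWT"}).encode())
body = _b64url(json.dumps({
    "iss": "https://issuer.example",
    "sub": "signer",
    "aud": "https://ca.example",
}).encode())
token = f"{header}.{body}.placeholder-signature"

claims = decode_claims(token)
print(audience_matches(claims, "https://ca.example"))     # intended audience
print(audience_matches(claims, "https://other.example"))  # must be rejected
```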

OIDC ID tokens are signed using the OIDC provider’s private key. The corresponding public key is distributed from a discoverable HTTP endpoint hosted by the OIDC provider.

Signed DOI will be built using GitHub Actions, and GitHub Actions can automatically authenticate build processes with the GitHub Actions OIDC provider, making ID tokens available to build processes (Figure 7).

Key compromise

We mentioned at the start of this post that the private keys must be kept private for the system to remain secure. If the signer’s private key becomes compromised, a malicious party can create signatures that can be verified as being signed by the signer.

Let’s walk through a few ways to mitigate the risk of these keys becoming compromised.

Ephemeral keys

A nice way to reduce the risk of compromise of private keys is to not store them anywhere. Key pairs can be generated in memory, used once, and then the private key can be discarded. This means that certificates are also single-use, and a new certificate must be requested from the CA every time a signature is created.

Transparency logging

Ephemeral keys work well for the signing keys themselves, but there are other things that can be compromised:

- The CA’s private key (practically, this cannot be ephemeral)

- The OIDC provider’s private key (practically, this cannot be ephemeral)

- The OIDC account credentials

These keys/credentials must be kept private, but in case of an accidental compromise, we need to have a way to detect misuse. In this situation, a transparency log (TL) can help.

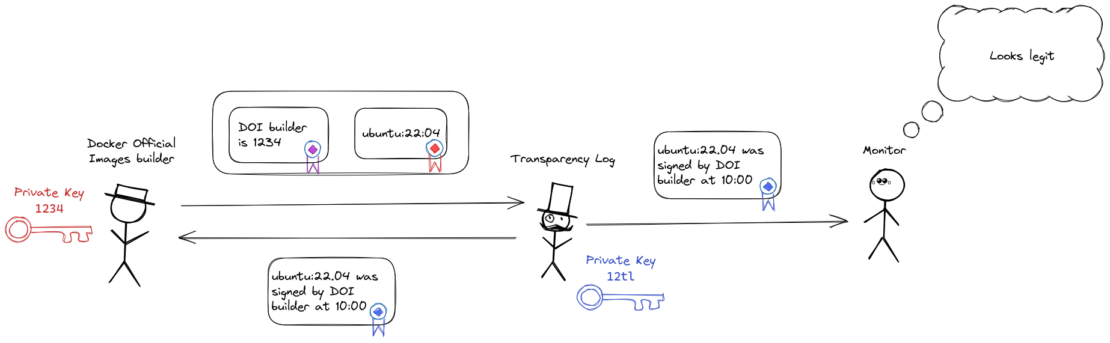

A transparency log is an append-only, tamper-evident data store. When data is written to the log, a signed receipt is returned by the operator of the log, which can be used as proof that it is contained in the log. The log can also be monitored to check for suspicious activity.
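One way to picture why rewriting such a log is detectable is a simple hash chain, sketched below. This is a simplification chosen for brevity: production transparency logs typically use Merkle trees and signed receipts, which this example does not implement, and the function names are hypothetical.

```python
import hashlib

EMPTY_HEAD = b"\x00" * 32


def extend(head: bytes, entry: bytes) -> bytes:
    """Fold a new entry into the running head of the log."""
    return hashlib.sha256(head + hashlib.sha256(entry).digest()).digest()


def log_head(entries: list) -> bytes:
    """Recompute the head from scratch over all entries."""
    head = EMPTY_HEAD
    for entry in entries:
        head = extend(head, entry)
    return head


entries = [b"signature-1", b"signature-2"]
recorded_head = log_head(entries)          # what an auditor remembers

# Appending is consistent with the head the auditor recorded earlier.
entries.append(b"signature-3")
print(log_head(entries) == extend(recorded_head, b"signature-3"))

# Rewriting history changes the head, so the auditor notices.
print(log_head([b"forged", b"signature-2"]) == recorded_head)
```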

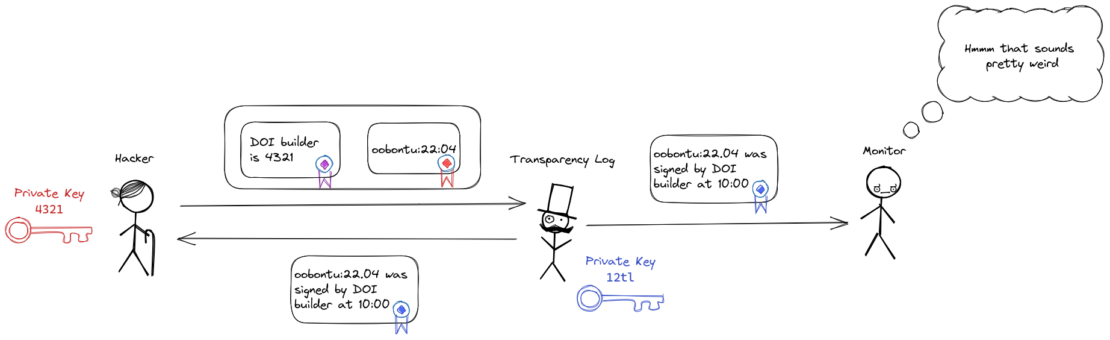

We can use a transparency log to store all signatures and bundle the TL receipt with the signature. We can only accept a signature as valid if the signature is bundled with a valid TL receipt. Because a signature will only be valid if an entry is in the TL, any malicious party creating fake signatures will also have to publish an entry to the TL. The TL can be monitored by the signer, who can sound the alarm if they notice any signatures in the log they didn’t create (Figure 8). The log can also be monitored by concerned third parties to check for any signatures that don’t look right (Figure 9).
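The signer-side monitoring described above amounts to comparing the log with the signer's own records. A minimal sketch follows, assuming a hypothetical log entry shape with `identity` and `signature` fields.

```python
def unexpected_signatures(log_entries: list, identity: str, known: set) -> list:
    """Return log entries that claim our identity but that we never created."""
    return [
        entry for entry in log_entries
        if entry["identity"] == identity and entry["signature"] not in known
    ]


log_entries = [
    {"identity": "DOI builder", "signature": "sig-a"},
    {"identity": "DOI builder", "signature": "sig-x"},   # not one of ours
    {"identity": "another project", "signature": "sig-b"},
]
our_signatures = {"sig-a"}

suspicious = unexpected_signatures(log_entries, "DOI builder", our_signatures)
print(suspicious)
```

Anything returned here is a reason to sound the alarm, because it means someone else was able to sign as that identity.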

We can also use a transparency log to store certificates issued by the CA. A certificate will only be valid if it comes with a TL receipt. This is also how TLS certificates work: browsers will only trust them if they have an attached TL receipt.

The TL receipts also contain a timestamp, so a TL can completely replace the role of the TSA while also providing extra functionality.

Similar attacks with a stolen private key and a legitimate certificate are also detectable in this way.

A summary of the signing status quo

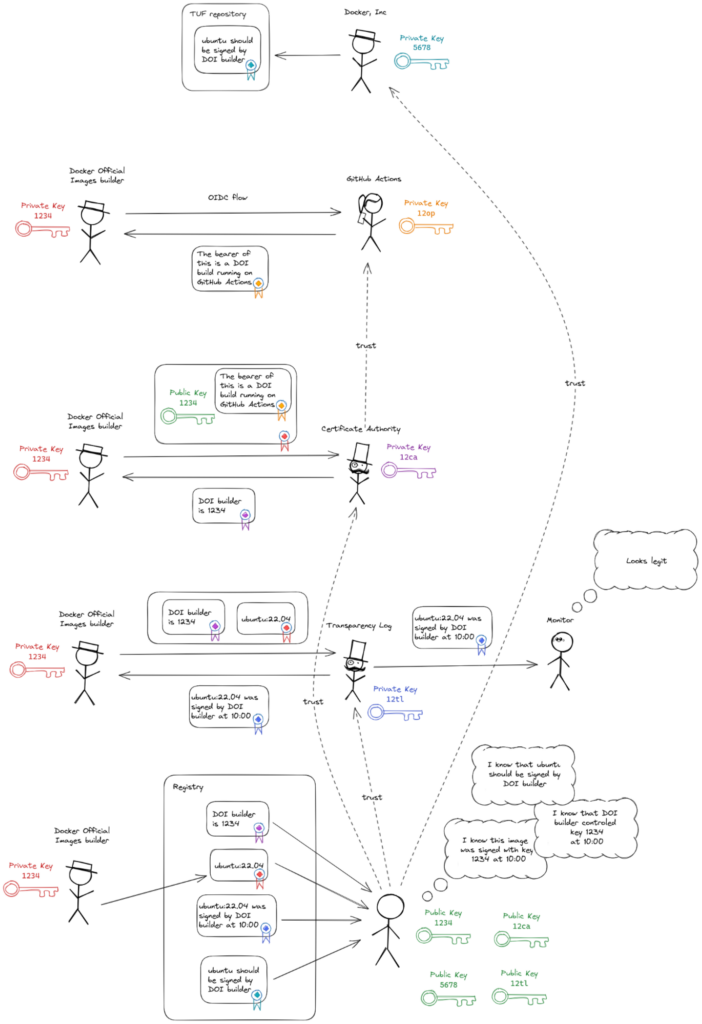

Everything up to this point describes the status quo in artifact signing. Let’s pull together all of the components described so far to recap (Figure 10). These are:

- OIDC provider, to verify the identity of some entity

- Certificate authority, to issue certificates binding the identity to a public key

- Signer, to sign an image with the corresponding private key

- Transparency log (TL), to store signatures and return signed timestamped receipts

- TUF repository, to distribute trust policy

- Transparency log monitors, to detect malicious behavior

- Registry, to store all of the artifacts

- Client, to verify signatures on images

The client verifying a signature needs to trust:

- The CA

- The TL

- The OIDC provider (transitively, they need to trust that the CA verifies ID tokens from the OIDC provider correctly)

- The signers of the TUF repository

There are many things to trust. Any of these entities being compromised or acting maliciously themselves will compromise the security of the system. Even if such a compromise can be detected by monitoring the transparency log, remediation can be difficult. Removing any of these points of trust without compromising the overall security of the solution would be an improvement.

Docker’s proposed signing solution

Before a CA issues a certificate, it needs to verify control of the private key and control of the identity. In Figure 10, the CA outsources the identity verification to an OIDC provider. We can already use the OIDC provider to verify the identity, but can we use it to verify control of the private key? It turns out that we can.

OpenPubkey is a protocol for binding OIDC identities to public keys. Full details of how it works can be found in the OpenPubkey paper, but below is a simplified explanation.

OIDC recommends that a unique random number be sent as part of the request to the OIDC provider. This number is called a nonce.

If the nonce is sent, the OIDC provider must return it in the signed JWT (JSON Web Token) called an ID token. We can use this to our advantage by constructing the nonce as a hash of the signer’s public key and some random noise (as the nonce still has to be random). The signer can then bundle the ID token from the OIDC provider with the public key and the random noise and sign the bundle with its private key.
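The nonce construction can be sketched as follows. The exact encoding OpenPubkey uses is defined by the protocol and is not reproduced here. This example simply hashes a placeholder public key together with 32 bytes of random noise, and the function names are hypothetical.

```python
import hashlib
import hmac
import secrets


def make_nonce(public_key: bytes, noise: bytes) -> str:
    """Commit to the public key; the noise keeps the nonce unpredictable."""
    return hashlib.sha256(public_key + noise).hexdigest()


def nonce_commits_to_key(nonce_claim: str, public_key: bytes, noise: bytes) -> bool:
    """Verifier side: recompute the commitment from the bundled public key
    and noise, and compare it with the nonce inside the signed ID token."""
    return hmac.compare_digest(nonce_claim, make_nonce(public_key, noise))


public_key = b"placeholder public key bytes"
noise = secrets.token_bytes(32)
nonce = make_nonce(public_key, noise)      # sent to the OIDC provider

print(nonce_commits_to_key(nonce, public_key, noise))      # matching key
print(nonce_commits_to_key(nonce, b"another key", noise))  # different key
```

Because the provider signs the ID token that contains this nonce, it indirectly vouches for the binding between the identity and the public key.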

The resulting token (called a PK token) proves control of the OIDC identity and control of the private key at a specific time, as long as a verifier trusts the OIDC provider. In other words, the PK token fulfills the same role as the certificate provided by the CA in all the signing flows up to this point, but does not require trust in a CA. This token can be distributed alongside signatures in the same way as a certificate.

OIDC ID tokens, however, are designed to be verified and discarded in a short timeframe. The public keys for verifying the tokens are available from an API endpoint hosted by the OIDC provider. These keys are rotated frequently (every few weeks or months), and there is currently no way to verify a token signed by a key that is no longer valid. Therefore, a log of historic keys will need to be used to verify PK tokens that were signed with OIDC provider keys that have been rotated out.

This log is an additional point of trust for a verifier, so it may seem we’ve removed one point of trust (the CA) and replaced it with another (the log of public keys). For DOI, we have already added another point of trust with the TUF repository used to distribute trust policy. We can also use this TUF repository to distribute the log of public keys.

OpenPubkey enhancements

As originally formulated, OpenPubkey was not designed to support code signing workflows as we’ve described. As a result, the implementation described here has a few drawbacks. In the following, we discuss each drawback and its associated solution.

OIDC ID tokens are bearer auth tokens

An OIDC ID token is a JWT signed by the OIDC provider that allows the bearer of the token to authenticate as the subject of the token. As we will be publishing these tokens publicly, it means a malicious party could take a valid ID token from the registry and present it to a service to identify as the subject of the ID token.

In theory, this should not be a problem because, according to the OIDC spec, any consumer must check the audience in the ID token before trusting the token (i.e., if the token is presented to Service Foo, Service Foo must check that the token was intended for Service Foo by checking the audience claim). However, there have been issues with OIDC client libraries not making this check.

To solve this issue, we can remove the OIDC provider’s signature from the ID token and replace it with a Guillou-Quisquater (GQ) signature. This GQ signature allows us to prove that we had the OIDC provider’s signature without sharing the signed token, and this proof can be verified using the OIDC provider’s public key and the rest of the ID token. More information on GQ signatures can be found in the original paper and in the OpenPubkey reference implementation. We’ve used a similar approach to one discussed in a paper by Zachary Newman.

OIDC ID tokens can contain personal information

For the case where OIDC ID tokens from CI systems such as GitHub Actions are used, it is unlikely that there is any personal information that could be leaked in the token. For example, the full data made available in a GitHub Actions OIDC ID token is documented on GitHub.

Some of this data, such as the repository name and the Git commit digest, are already included in the unsigned provenance attestations that the Docker build process generates. ID tokens representing human identities may include more personal data, but arguably, this is also the kind of data consumers may wish to verify as part of trust policy.

Key compromise

If the signer’s private key is compromised (admittedly unlikely as this is an ephemeral key), it is trivial for an attacker to sign any images and combine the signatures with the public PK token. As mentioned previously, the transparency log can help detect this kind of compromise, but we can go further and prevent it in the first place.

In the original OpenPubkey flow, we create the nonce from the signer’s public key and random noise, then use the corresponding private key to sign the image. If, however, we also include the hash of the image in the nonce, then the image, which we have already signed, is in effect also signed by the OIDC provider. This means the PK token becomes a one-use token that cannot be replayed to sign other images. Thus, compromising the ephemeral private key is no longer useful to an attacker.
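A minimal sketch of this enhancement is shown below, assuming a hypothetical encoding in which the public key, the noise, and the image digest are each length-prefixed and hashed together. The real encoding is defined by the OpenPubkey implementation and may differ.

```python
import hashlib
import secrets


def make_nonce(public_key: bytes, noise: bytes, image_digest: bytes) -> str:
    """Commit to the key, the noise, AND the image being signed."""
    h = hashlib.sha256()
    for part in (public_key, noise, image_digest):
        h.update(len(part).to_bytes(4, "big"))  # length prefix avoids ambiguity
        h.update(part)
    return h.hexdigest()


def token_covers_image(nonce_claim: str, public_key: bytes,
                       noise: bytes, image: bytes) -> bool:
    """Verifier side: the token is only acceptable for the committed image."""
    image_digest = hashlib.sha256(image).digest()
    return nonce_claim == make_nonce(public_key, noise, image_digest)


public_key = b"placeholder public key bytes"
noise = secrets.token_bytes(32)
image = b"official image contents"
nonce = make_nonce(public_key, noise, hashlib.sha256(image).digest())

print(token_covers_image(nonce, public_key, noise, image))
print(token_covers_image(nonce, public_key, noise, b"attacker image"))
```

The second check fails even though the key and noise are unchanged, which is why a stolen ephemeral key cannot be reused with the same PK token.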

OpenPubkey uses the nonce claim in the ID token

The full OIDC flow isn’t available on GitHub Actions, so a build process cannot supply a nonce. Instead, a simple HTTP endpoint is provided where a build process can request an ID token with an optional audience (aud) claim. We still need to get the OIDC provider to sign some arbitrary data during authentication. We can do this by sending data to the OIDC provider that will end up in one of the ID token claims, as long as we’re not preventing the claim’s intended use. Because GitHub Actions allows us to set the aud claim to an arbitrary value, we can use it for this purpose.
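As a sketch of what such a request could look like, the example below builds the token request URL with the commitment passed as the audience. It does not contact any network service. The placeholder URL, the commitment encoding, and the function names are assumptions. In a real workflow the base URL and bearer token are provided to the job through the `ACTIONS_ID_TOKEN_REQUEST_URL` and `ACTIONS_ID_TOKEN_REQUEST_TOKEN` environment variables.

```python
import hashlib
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def commitment(public_key: bytes, noise: bytes) -> str:
    """Hex commitment that would otherwise have been sent as the nonce."""
    return hashlib.sha256(public_key + noise).hexdigest()


def id_token_request_url(base_url: str, audience: str) -> str:
    """Add an `audience` query parameter to the token request URL,
    keeping any query parameters the URL already has."""
    parts = urlsplit(base_url)
    query = parse_qsl(parts.query, keep_blank_values=True)
    query.append(("audience", audience))
    return urlunsplit(parts._replace(query=urlencode(query)))


# Placeholder values so the sketch runs anywhere.
base_url = "https://token.example.invalid/idtoken?api-version=2.0"
aud = commitment(b"placeholder public key bytes", b"\x01" * 32)

print(id_token_request_url(base_url, aud))
```

The ID token returned for this request would carry the commitment in its aud claim, signed by the provider, where the nonce would otherwise have been.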

What’s next?

Docker aims to enable the broader open source community to improve security across the entire software supply chain. We feel strongly that good security requires good, easy-to-use tooling. Or, as Founder and CEO of Bounce Security Avi Douglen more eloquently put it, “Security at the expense of usability comes at the expense of security.”

The approach explained in this post aims to make signing container images as easy as possible without sacrificing security and trust. By simplifying the overall approach and eliminating complicated infrastructure requirements, our goal is to foster widespread adoption of container signing, in the same way we enabled the widespread adoption of Linux containers a decade ago.

Open source community and cryptography practitioners: Let us know what you think of this approach to signing. You can review the preliminary implementation across the various repositories in the OpenPubkey GitHub organization. Feel free to open issues in the various repositories or join the discussion in the OpenSSF community.

We look forward to hearing your feedback and working together to improve the security of the software supply chain!

Learn more

- Questions about DOI signing? Check out the DOI signing FAQ.

- Use Docker Scout to improve your software supply chain security.

- Implementation questions? Check out the code in the OpenPubkey GitHub organization.

- Questions about OpenPubkey? See the OpenPubkey FAQ.

- Have questions? The Docker community is here to help.

- New to Docker? Get started.

Stick Figures image library by Youri Tjang.